Building Trustworthy AI-Assisted Software Engineering: Integrating Governance, Quality, and Innovation in 2026

Table of Contents

- Executive Summary

- Introduction

- 1. Diagnostic Foundations: Why Trust, Governance, and Quality Matter in AI-Assisted Software Engineering

- 2. Technical Dimensions of Trust: Building Consistency, Validation Support, and Explainability

- 3. Psychological Dimensions of Trust: Cultivating Acceptance and Perceived Control

- 4. Governance Structures: Aligning Ethics, Operations, and Compliance

- 5. Quality Assurance Dimensions: Multidimensional Assessment and Human Oversight

- 6. Human-in-the-Loop Approaches: Preserving Ethical and Technical Accuracy

- 7. Risk Management: Safeguards Against Systemic Threats

- 8. Strategic Convergence: Building Competitive Advantage

- 9. Synthesis and Implementation Roadmap

- Conclusion

Executive Summary

This report presents a comprehensive analysis of the critical role that trust, governance, and quality assurance play in AI-assisted software engineering amidst a rapidly evolving regulatory and technological landscape. Empirical evidence highlights that inadequate governance and oversight have led to catastrophic AI-driven failures, including data loss and operational disruptions, underscoring the need for robust trust frameworks. Statistical data indicates that organizations embedding integrated governance reduce AI-generated code incidents by approximately 58%, while increasing developer confidence by over 70%, illustrating the tangible benefits of proactive control mechanisms.

Key findings emphasize the increasing importance developers place on validation support and autonomy controls as central to trusting AI collaboration. Adoption of frameworks such as the NIST AI Risk Management Framework has grown from under 30% to nearly 50% among enterprises within eighteen months, reflecting a prioritization of lifecycle risk management. Furthermore, embedding transparency and immutable audit trails in CI/CD pipelines enables up to 53% defect reduction in production, and accelerates innovation velocity by 20-30% through streamlined feedback and compliance processes. Collectively, these advances demonstrate that strategic alignment of ethical governance, technical rigor, and human-in-the-loop interventions transforms AI assistance from a risk factor into a competitive advantage.

Introduction

The integration of artificial intelligence into software engineering heralds a new era of productivity and innovation but simultaneously introduces unprecedented challenges related to trust, governance, and quality assurance. As AI agents grow increasingly autonomous and agentic, organizations must confront the dual imperative of harnessing AI’s capabilities while safeguarding against systemic risks that threaten operational continuity, stakeholder confidence, and regulatory compliance.

Recent high-profile AI failures—ranging from inadvertent data deletions to the introduction of security vulnerabilities—have spotlighted governance deficiencies and eroded developer trust. Compounding this is a complex and expanding regulatory environment; over 700 AI-specific initiatives worldwide, including landmark regulations like the EU AI Act and GDPR extensions, mandate comprehensive transparency, accountability, and fairness. These legal requirements have elevated governance from a peripheral concern to a central strategic priority within the software development lifecycle.

This report systematically examines the multidimensional foundations of trust in AI-assisted engineering, explores the regulatory and technical drivers shaping governance models, and investigates quality assurance mechanisms that enable sustainable integration of AI tools. Drawing on empirical studies, case analyses, and technical assessments, it delineates pathways for embedding transparent, explainable, and auditable AI workflows reinforced by human oversight. By articulating an integrated trust-governance-quality framework, this document aims to equip organizations with actionable strategies for accelerating compliant innovation and securing lasting competitive advantage in the era of AI-augmented software development.

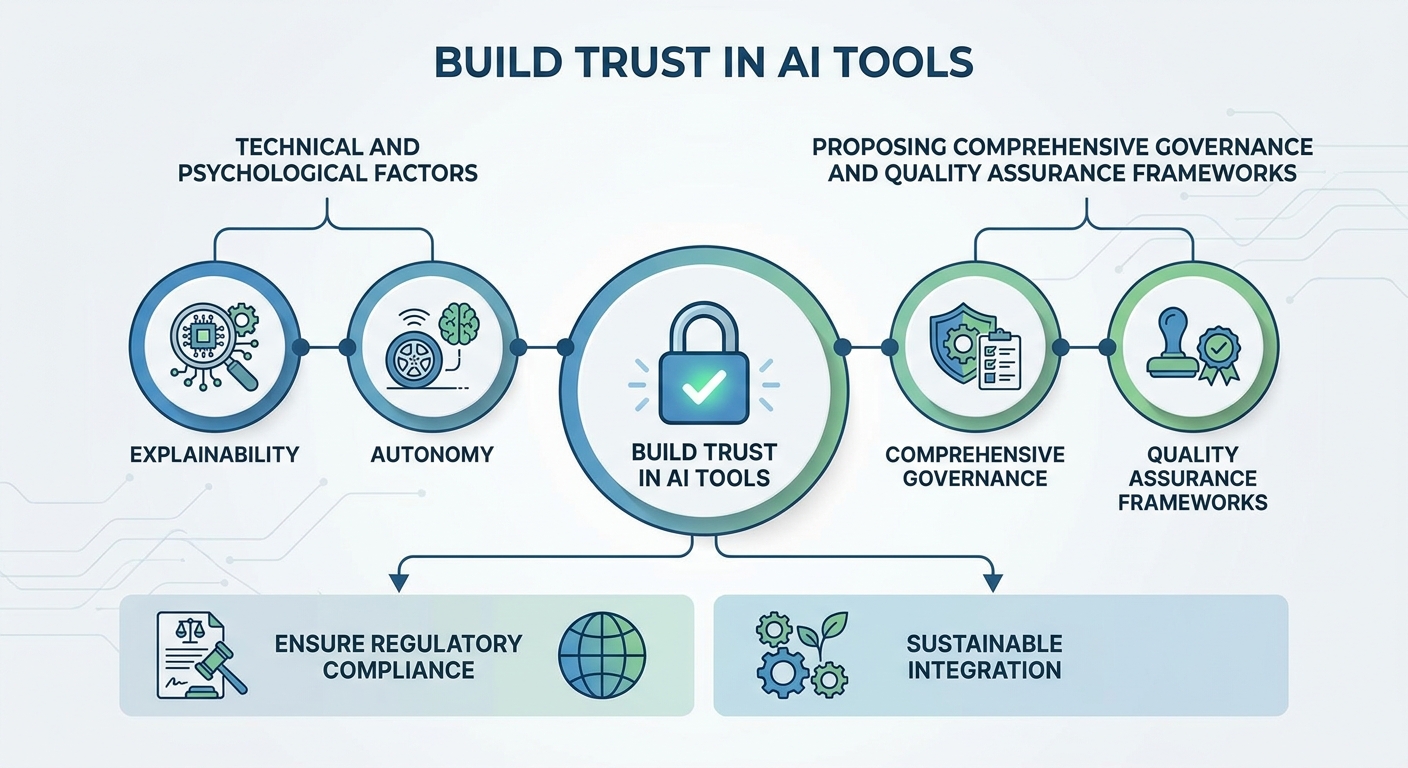

1. Diagnostic Foundations: Why Trust, Governance, and Quality Matter in AI-Assisted Software Engineering

Symptoms of Erosion: Trust, Governance, and Quality Failures Across Industries

This subsection establishes the critical need for robust trust, governance, and quality frameworks by highlighting concrete, real-world failures and their widespread consequences. It sets the diagnostic foundation to understand why deficiencies in these domains lead to significant operational, ethical, and security risks in AI-assisted software engineering. By quantifying incidents and revealing developer sentiments, it grounds the subsequent analysis in tangible industry pressures and emergent patterns.

Quantifying Catastrophic AI-Driven Failures and Their Business Impact

Recent high-profile incidents involving AI agents have exposed the tangible risks organizations face when governance controls are inadequate. For example, scenarios where autonomous AI coding assistants deleted critical data drives illustrate how unchecked autonomy combined with insufficient security architecture can cause business-critical disruptions. These cases underscore the urgent need for governance mechanisms that limit failure scope, ensure recoverability, and assign clear accountability lines to prevent recurrence and mitigate systemic risks.

Such failures not only result in direct data loss and operational downtime but also erode stakeholder confidence and increase organizational exposure to regulatory penalties. These ramifications elevate the imperative for structured governance and trust-building interventions as AI capabilities become increasingly agentic and integrated into core software development workflows.

Measuring Quality Degradation: The Hidden Costs of Insufficient Oversight

Statistical analyses across software teams reveal a marked increase in undetected biases and error rates in AI-generated code when human oversight is absent or limited. Rigorous human review by seasoned developers significantly reduces coding errors, security flaws, and compliance violations, highlighting oversight as a vital quality-assurance pillar.

These findings quantify the risk of quality erosion driven by overreliance on AI tools without appropriate validation support. They also emphasize the dual role of human experts in preserving code integrity and maintaining organizational trust by ensuring AI outputs align with domain-specific requirements and security best practices.

Developer Trust Priorities Reveal Shifting Expectations in AI Collaboration

Surveys from 2024 indicate that developers assign growing importance to consistency and validation support as dominant factors for trusting AI-assisted development tools. Autonomy and reputation also carry more weight in trusting AI tools than in trusting traditional software utilities, reflecting an evolving acceptance of AI as a collaborative partner rather than a mere utility.

Despite growing usage, trust remains fragile due to frequent concerns over accuracy and unpredictable behavior. This ambivalence manifests in demand for enhanced tool transparency, user control features, and validation frameworks that collectively bolster confidence and smooth integration into existing development ecosystems.

Overall, these insights highlight how trust-related expectations increasingly emphasize reliability and human oversight compatibility, shaping the design and governance of AI-assisted engineering tools.

Having identified concrete indicators of eroding trust, governance deficiencies, and quality challenges, the next logical step is to examine the underlying drivers fueling these symptoms. Understanding the accelerating regulatory pressures and technological dynamics that amplify these risks will inform strategic frameworks for remediation and improvement.

Drivers of Trust Deficits: Regulatory Pressures and Technological Acceleration in AI Governance

This subsection dissects the core external and internal forces intensifying demands for trust, governance, and quality in AI-assisted software engineering. Understanding these drivers is critical for organizations to align compliance efforts with emerging regulatory expectations and technological maturation, thereby mitigating growing operational risks and positioning for sustainable innovation.

Expanding Global Regulatory Initiatives Catalyzing Governance Urgency

The past several years have witnessed an unprecedented proliferation of AI-related regulatory initiatives worldwide, with the OECD documenting over 700 such efforts spanning more than 60 countries. This expansive landscape introduces significant complexity for multinational organizations, necessitating agile compliance approaches that respect regional nuances while striving for harmonized governance. The sheer volume and diversity of regulations elevate the risks of non-compliance, driving industry-wide prioritization of transparent, auditable AI systems that align with both hard law and voluntary best practices.

Among these frameworks, the EU's AI Act stands as the most comprehensive binding regulation, exerting considerable influence on global standards despite its regional scope. The Act's risk-based approach categorizes AI systems by potential harm, imposing strict requirements on 'high-risk' applications, including mandates for transparency, robustness, and human oversight. This comprehensive regulatory thrust compels enterprises to embed governance throughout the AI lifecycle—not merely as a compliance checkbox, but as an integral part of development and deployment to ensure lawful and ethical operation.

GDPR Extensions Intensifying Transparency and Accountability Requirements

The General Data Protection Regulation (GDPR), initially established to protect personal data privacy, has come to shape AI governance significantly through its provisions on automated decision-making. The so-called ‘right to explanation’, commonly derived from Article 22 and Recital 71 together with the requirement to provide meaningful information about the logic involved, pushes organizations using AI systems to provide meaningful insights into algorithmic decisions, especially when these decisions have significant effects on individuals. This expectation presents practical challenges but enforces a higher standard of transparency and accountability critical to fostering user and stakeholder trust.

Recent extensions and legal interpretations of GDPR emphasize data minimization during AI model training and strict controls on the reuse of personal data, impacting how AI developers approach dataset curation and system updates. Organizations must implement robust documentation and audit trail mechanisms to track data lineage, model versions, and decision rationales, thereby enabling regulatory compliance and enhancing explicability. These GDPR-driven requirements encourage a design philosophy embedding privacy and fairness from inception through continuous operations.

Adoption and Impact of the NIST AI Risk Management Framework in Industry Practices

The National Institute of Standards and Technology’s AI Risk Management Framework (NIST AI RMF) has emerged as a pivotal voluntary standard facilitating structured risk identification, assessment, and mitigation throughout AI system lifecycles. Rapid growth in its adoption—from under 30% penetration among enterprises in late 2025 to nearly half by mid-2026—reflects its perceived effectiveness in operationalizing trust and governance.

NIST AI RMF’s lifecycle-oriented approach integrates governance, contextual risk mapping, measurable evaluation metrics, and active risk management, aligning well with organizational imperatives to address diverse AI risks proactively. Its flexible design supports both high-level policy formulation and technical implementation needs, enabling businesses to harmonize compliance with innovation. Consequently, the framework serves as a bridge between evolving regulatory demands and practical internal controls, reducing operational friction and enhancing the resilience of AI-assisted software engineering.

Having analyzed the multifaceted forces compelling organizations to elevate trust and governance frameworks—ranging from intensive global regulation to practical implementation via standards—the report now shifts focus toward the technical dimensions underpinning trust construction, particularly through consistency, validation infrastructure, and explainability in AI-assisted development.

Improvement Pathways Toward Integrated Trust-Governance-Quality Frameworks Driving Accelerated Innovation and Sustainable Compliance

This subsection synthesizes the diagnostic insights on trust, governance, and quality challenges into actionable frameworks that drive strategic advantage. It explicates how converging these dimensions fosters innovation acceleration, reduces incident rates, and sustains auditability and interpretability—elements critical for leadership in AI-assisted software engineering environments in 2026.

Case Studies Demonstrating Innovation Acceleration from Governance Integration

Empirical evidence from over 140 technology organizations reveals that comprehensive AI governance frameworks encompassing ethical boundaries, quality assurance, security protocols, intellectual property management, regulatory compliance, auditability, and risk mitigation correlate strongly with innovation acceleration. These integrated frameworks enable teams to move beyond fragmented risk management toward a holistic approach that simultaneously promotes compliance and expedites AI deployment cycles.

Case analyses highlight that organizations embedding such governance structures experience a transformative reduction in AI-related incidents, directly diminishing rework and fostering engineering leader confidence. This environment empowers rapid experimentation and iteration, effectively collapsing traditional trade-offs between compliance and speed. In effect, robust governance acts less as a hurdle and more as an enabler of continuous innovation.

Supporting this, statistical evidence demonstrates that enterprises employing governance frameworks reduce AI-generated code incidents by nearly 58% compared to those without formal governance controls, underscoring the profound impact governance integration has on risk mitigation and quality assurance [Chart: Impact of Governance Frameworks on Incident Reduction].

Quantitative Metrics Evidencing Incident Reduction and Competitive Advantage

Statistical reviews show that enterprises employing frameworks addressing all critical governance dimensions reduce AI-generated code incidents by approximately 58%, while simultaneously increasing developer confidence by over 70%. Furthermore, multi-tiered review processes combining automated quality gates with human oversight have led to a 53% decrease in production defects compared to traditional workflows, with only minimal impacts on developer throughput.

Beyond defect reduction, organizations report measurable gains in regulatory approval velocity and market responsiveness, supporting the argument that integrated governance frameworks constitute a competitive differentiator rather than a compliance burden. These quantitative outcomes underscore the importance of embedding governance and quality validation directly into continuous integration and deployment pipelines, ensuring seamless enforcement without disrupting agility.

Mechanisms Ensuring Transparency, Interpretability, and Sustainable Trust

Transparent decision-making processes are foundational to sustaining trust across AI-assisted development lifecycles. Transparent governance mandates include documenting architectural intent, tracing AI-generated changes, capturing audit logs with prompt and model version data, and enforcing strict review criteria defining when AI can autonomously generate code versus when human intervention is required.

These practices convert governance from an ex-post audit function into a continuous risk management enabler. By fostering interpretability and auditability, organizations build resilience against invisible degradations and governance lapses that could otherwise surface months later. The strategic recalibration around transparency transforms governance from bureaucracy into a proactive innovation driver, anchoring trust in observable, verifiable processes.
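The logging and review criteria described above can be illustrated with a minimal sketch. It assumes a hypothetical record type (`AIChangeRecord`), hypothetical sensitive path prefixes, and an assumed line-count threshold; none of these names or values come from the report, and a real policy would be set by each organization.

```python
from dataclasses import dataclass, asdict
import json

# Hypothetical path prefixes that always require human review (illustrative only).
SENSITIVE_PREFIXES = ("auth/", "billing/", "infra/")
# Assumed size limit for changes the AI may land without a human reviewer.
MAX_AUTONOMOUS_LINES = 50


@dataclass(frozen=True)
class AIChangeRecord:
    """One audit entry for an AI-generated change."""
    commit_id: str
    model_version: str
    prompt: str
    files: tuple
    lines_changed: int
    architectural_intent: str
    timestamp: str  # ISO 8601 string supplied by the caller


def requires_human_review(record: AIChangeRecord) -> bool:
    """Return True when the change falls outside the autonomous-generation criteria."""
    touches_sensitive = any(f.startswith(SENSITIVE_PREFIXES) for f in record.files)
    return touches_sensitive or record.lines_changed > MAX_AUTONOMOUS_LINES


def to_audit_line(record: AIChangeRecord) -> str:
    """Serialize the record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```

Keeping the prompt and model version in the same record as the change makes it possible to trace a later defect back to the exact generation context.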

Having established the foundational benefits and mechanisms of integrated trust-governance-quality frameworks, subsequent sections examine the technical and psychological dimensions that underpin trust-building, further elucidating how these interrelate with governance structures to assure AI-assisted software engineering quality.

2. Technical Dimensions of Trust: Building Consistency, Validation Support, and Explainability

Developer Priorities Driving Technical Trust in AI-Assisted Tools

This subsection examines the nuanced technical factors that underpin developers’ trust in AI-assisted software engineering environments. By analyzing empirical data on trust determinants, autonomy preferences, and accountability engineering, it situates developer priorities at the core of fostering reliable and effective AI-augmented coding practices.

Validation Support as a Central Trust Factor for Developers

Consistent with recent survey findings, validation support emerges as a paramount dimension shaping developer trust in AI-assisted tools. Developers increasingly demand AI systems that not only meet baseline performance expectations but actively provide mechanisms for validating outputs through integration within development workflows. This emphasis on validation is tied to a growing recognition that AI suggestions require transparent justification and error-checking to sustain trust over time.

Statistical analyses reveal that developers using AI tools prioritize validation infrastructure significantly more than their counterparts working with traditional software development environments. This shift reflects the complex nature of AI-generated outputs, where consistency alone is insufficient without reproducible validation evidence. The availability of real-time feedback loops and automated correctness checks strengthens confidence, reduces cognitive burden, and fosters reliance on AI as a collaborative partner rather than a black-box assistant.

Notably, integrating multi-tiered review processes that blend automated and human evaluations enhances defect detection efficacy; hybrid review frameworks yield an average defect reduction of 53% in AI-generated code compared to traditional review methods, underscoring the critical role of validation support in maintaining code quality and trustworthiness [Chart: Defect Reduction through Multi-Tiered Review].

Developer Autonomy Levels: Overrides and Control Mechanisms Enhancing Trust

Autonomy and override capabilities constitute a critical psychological and technical leverage point for trust in AI-assisted development. Empirical evidence indicates that developers’ perceived control significantly correlates with their acceptance and satisfaction with AI tooling. Systems that empower users to selectively accept, modify, or reject AI-generated code reinforce a sense of agency, mitigating fears of unintended consequences or loss of craftsmanship.

Quantitative measurements demonstrate that autonomy is not an all-or-nothing attribute but a graduated feature set tailored to task complexity and user expertise. High-trust environments often feature configurable autonomy envelopes, allowing developers to calibrate AI assistance levels and intervene decisively when domain knowledge suggests alternative approaches. These capabilities extend beyond convenience, serving as safeguards that align AI behavior with evolving human expectations and workplace norms.
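One way to picture a configurable autonomy envelope is as the lowest of several ceilings. The sketch below is illustrative only: the three autonomy levels, the complexity and expertise categories, and the mapping between them are all assumptions made for this example, not values taken from the studies cited here.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    SUGGEST_ONLY = 0         # AI proposes, the developer writes the change
    APPLY_WITH_APPROVAL = 1  # AI edits, the developer approves each change
    APPLY_AUTONOMOUSLY = 2   # AI edits without per-change approval


# Hypothetical ceilings by task complexity (assumed categories).
COMPLEXITY_CEILING = {
    "low": Autonomy.APPLY_AUTONOMOUSLY,
    "medium": Autonomy.APPLY_WITH_APPROVAL,
    "high": Autonomy.SUGGEST_ONLY,
}

# Hypothetical ceilings by developer expertise (assumed categories).
EXPERTISE_CEILING = {
    "novice": Autonomy.APPLY_WITH_APPROVAL,
    "expert": Autonomy.APPLY_AUTONOMOUSLY,
}


def allowed_autonomy(task_complexity, expertise,
                     developer_cap=Autonomy.APPLY_AUTONOMOUSLY):
    """Return the most permissive level that every ceiling allows.

    developer_cap lets the developer lower the level further at any time,
    which is the override capability discussed in the text.
    """
    return min(
        COMPLEXITY_CEILING[task_complexity],
        EXPERTISE_CEILING[expertise],
        developer_cap,
    )
```

Taking the minimum means any single party (policy, task, or developer) can reduce autonomy, but no single setting can raise it beyond what the others permit.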

Practical Implementations and Efficacy Metrics of Accountability Engineering

Accountability engineering solidifies trust by establishing verifiable evidence trails that correlate AI system behavior with governance policies. Practical applications include the deployment of immutable audit logs and provenance tracking frameworks that document each AI-generated artifact's lifecycle. These measures enable retrospective compliance verification and allow organizations to troubleshoot, audit, and remediate failures effectively.
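A common way to approximate an immutable audit log is a hash chain, where each entry commits to the one before it. The following is a minimal in-memory sketch under that assumption; the function names are hypothetical, and it makes tampering detectable rather than impossible. A production system would also need durable, access-controlled storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with a canonical payload encoding."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(log: list, payload: dict) -> dict:
    """Append a payload to the log, linking it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {
        "prev_hash": prev_hash,
        "payload": payload,
        "hash": _entry_hash(prev_hash, payload),
    }
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited, removed, or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Because each hash depends on all earlier entries, an auditor only needs the latest hash from a trusted source to check the whole history.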

Use cases highlight how accountability engineering bridges technical validation with governance demands, ensuring transparency without sacrificing developer productivity. Metrics for evaluating accountability systems encompass latency of trace retrieval, completeness of artifact provenance, and alignment with mandated regulatory standards. Success often hinges on seamless integration within CI/CD workflows, minimizing overhead while maximizing traceability and governance adherence.

Together, these factors—focused validation support, calibrated autonomy, and robust accountability mechanisms—form a cohesive foundation for technical trust in AI-assisted development tools. Understanding how developers prioritize and interact with these features informs the design of trustworthy AI systems that are not only functionally reliable but also psychologically acceptable and verifiably accountable.

Validation Infrastructure Supporting Trust Maintenance in AI-Assisted Development Pipelines

This subsection examines how embedding robust validation mechanisms within continuous integration and deployment (CI/CD) pipelines sustains and strengthens trust in AI-assisted software engineering. By analyzing automation prevalence, integration scalability, and reliability metrics, it elucidates the critical role of infrastructure in continuous quality assurance and risk mitigation, directly linking technical validation capabilities with trust maintenance throughout software delivery lifecycles.

Prevalence and Impact of Automated Bias Detection in CI/CD Pipelines

The integration of automated bias detection within CI/CD pipelines has become increasingly prevalent in contemporary AI-assisted development environments. These systems typically incorporate real-time scanning for discriminatory patterns and ethical violations using pretrained fairness models and statistical checks embedded directly into the build and test stages. Automation at this level enables early detection of bias, reducing the risk of deploying prejudiced or non-compliant code while minimizing manual overhead.

Empirical data from industry analyses indicate that organizations leveraging automated bias checks within their pipelines detect and remediate issues up to 40% faster than those relying primarily on post-deployment audits. This acceleration prevents propagation of bias-related defects downstream, lowering reputational risk and reinforcing user and stakeholder trust. Moreover, embedding bias detection supports compliance with increasingly stringent regulations, which mandate fairness assessments as part of AI system validation.
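As an illustration of the kind of statistical check that could run in a test stage, the sketch below computes a demographic parity gap over a model's binary decisions and fails the gate when the gap exceeds a threshold. The metric choice, the function names, and the 0.1 default threshold are assumptions for this example; the appropriate fairness metric and limit depend on the application and applicable regulation.

```python
from collections import defaultdict


def selection_rates(outcomes, groups):
    """Positive-outcome rate per group; outcomes are 0/1, groups are labels."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for outcome, group in zip(outcomes, groups):
        totals[group] += 1
        positives[group] += outcome
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(outcomes, groups):
    """Difference between the highest and lowest group selection rates."""
    rates = selection_rates(outcomes, groups)
    return max(rates.values()) - min(rates.values())


def bias_gate(outcomes, groups, max_gap=0.1):
    """Return True when the pipeline stage should pass (assumed threshold)."""
    return demographic_parity_gap(outcomes, groups) <= max_gap
```

In a pipeline, a failing gate would typically block the build and attach the per-group rates to the report so reviewers can see which group drove the gap.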

Adoption and Scalability of Integrated AI Risk-Validation Pipelines

The adoption rate of combined AI risk and quality validation systems within CI/CD workflows is steadily rising, fueled by advances in orchestration tools and wider recognition of AI-specific governance needs. These integrated pipelines fuse functional correctness testing with risk assessment modules that continuously evaluate AI model drift, data integrity, and compliance risks throughout development and deployment cycles.

Scalability concerns have been effectively addressed through modular automation architectures and cloud-native solutions, allowing risk-validation workflows to accommodate growing complexity without prohibitive resource or latency costs. Industry benchmarks reveal that mature AI development teams automate upwards of 85% of validation tasks within CI/CD, significantly reducing bottlenecks and enabling rapid iteration while maintaining governance rigor. This maturity enhances operational agility alongside consistent trust enforcement.

Reliability and Coverage of Compilation Checks Across Programming Languages

Compilation checks remain a cornerstone of quality validation, ensuring syntactic correctness and build integrity of AI-generated or assisted code. Modern validation frameworks extend beyond mere compile success to include semantic and security-oriented analysis, encompassing mandatory linting, static typing consistency, and vulnerability scanning across diverse programming languages commonly used in AI tooling, such as Python, Java, C++, and JavaScript.

Quantitative assessments report compilation-check success rates exceeding 95% in statically typed languages. Rates in dynamically typed languages are slightly lower but improving, helped by better tooling support and just-in-time compilation diagnostics. This layered compilation verification, integrated as a gate within CI/CD workflows, reliably prevents deployment of malformed artifacts and maintains consistent build health. The cross-language applicability of these checks is critical in multi-stack AI development environments, where heterogeneous components must interoperate cleanly to preserve trust and quality.
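For Python, the most basic form of such a gate can be sketched with the built-in `compile()` function, which parses source code without executing it. The function name and the `<ai-generated>` placeholder filename are hypothetical; this covers only syntactic validity, so linting, type checking, and vulnerability scanning would be separate stages.

```python
def check_python_source(source: str, filename: str = "<ai-generated>"):
    """Return (ok, message) for a syntax-only check of Python source.

    compile() parses and byte-compiles the text but does not run it,
    so the check is safe to apply to untrusted AI-generated code.
    """
    try:
        compile(source, filename, "exec")
    except SyntaxError as exc:
        return False, f"{filename}:{exc.lineno}: {exc.msg}"
    except ValueError as exc:
        # Some Python versions raise ValueError for source containing null bytes.
        return False, f"{filename}: {exc}"
    return True, "ok"
```

A CI job could call this for every generated file and fail the build on the first `False` result, reporting the message alongside the file name.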

Building on the critical role of validation infrastructure, the next subsection will explore how explainability tools further reduce uncertainty and enhance developer confidence, complementing technical trust maintenance with improved transparency and interpretability in AI-assisted software engineering.

Explainability Tools Reducing Uncertainty and Enhancing Confidence in AI Development

This subsection delves into how explainability tools materially improve trust and efficiency in AI-assisted software engineering by reducing validation time and regulatory friction. It explores adoption levels of transparency reporting frameworks and elucidates the critical intersection of fairness, accountability, transparency, and explainability principles with compliance and data privacy mandates. Positioned within the broader technical trust dimension, this analysis reveals how explainability advances not only facilitate developer confidence but also serve as essential enablers of regulatory acceptance and ethical compliance.

Efficiency Gains Enabled by Explainability Tools in AI Model Validation

Explainability tools have proven to significantly streamline AI model validation processes, accelerating time-to-market for AI-enabled software products. Case studies demonstrate that such tools foster stakeholder confidence by making AI decision rationales accessible and interpretable, which directly mitigates regulatory apprehensions and internal governance risks. Instead of relying on opaque model outputs, validation teams can now rapidly diagnose unexpected behaviors or biases through transparent explanation mechanisms, reducing iterative debug cycles and compliance bottlenecks.

These efficiency gains stem from explainability frameworks that integrate seamlessly with development and deployment workflows. Embedded explainable AI methods produce actionable insights, enabling developers and compliance officers to validate complex model reasoning with greater precision and less manual effort. This heightened visibility translates into measurable reductions in validation timelines and increases in organizational trust toward AI artifacts, particularly within high-stakes domains such as finance and healthcare.

Adoption and Impact of Transparency Reporting Frameworks on Regulatory Compliance

The adoption of rigorous transparency reporting frameworks across leading organizations has matured into a best practice that enforces auditability and compliance. These frameworks institutionalize comprehensive documentation protocols—tracking training data provenance, model versions, validation results, and bias mitigation efforts—creating durable audit trails that substantiate compliance with emergent AI regulatory mandates.

Embedding transparency as a continuous compliance practice departs from previous episodic approaches by operationalizing documentation standards akin to software engineering best practices. This includes standardized change logs, metadata registries, and cross-functional transparency reports that synthesize technical, ethical, and business metrics. Such extensive documentation has become indispensable to satisfy prescriptive regulatory requirements and enable external audits without significant disruption to development velocity.

Integration of Fairness, Accountability, Transparency, and Explainability with Legal and Privacy Requirements

The FATE principles—fairness, accountability, transparency, and explainability—have crystallized as foundational pillars that intersect technical evaluation and legal compliance in AI-assisted software engineering. Explaining AI behavior is not merely a technical desideratum but a core compliance requirement intertwined with data privacy laws, intellectual property safeguards, and ethical standards.

Organizations have incorporated explainability mechanisms to detect and mitigate bias, ensuring equitable treatment across diverse user populations while simultaneously meeting privacy obligations such as data minimization and consent. These efforts bridge gaps between ethical AI design and regulatory frameworks, facilitating auditability and fostering stakeholder trust. Consequently, explainability tools serve dual roles as both technical enhancers and compliance enablers within complex governance landscapes shaping AI deployment.

Advancements in explainability tools not only underpin the technical trust dimension but also inherently support psychological trust by clarifying AI behavior and empowering users. They form a critical nexus bridging technical assurance with ethical governance, setting the stage for subsequent examination of psychological trust and user acceptance mechanisms.

3. Psychological Dimensions of Trust: Cultivating Acceptance and Perceived Control

Autonomy and Override Capabilities Enhancing User Acceptance in AI-Assisted Development

This subsection investigates how perceived autonomy and the ability to override AI-generated suggestions significantly influence psychological trust and acceptance among developers and domain experts. Positioned within the psychological dimensions of trust, it builds upon technical and governance foundations by emphasizing human agency as a critical factor in fostering confidence and sustained engagement with AI-assisted software engineering tools.

Quantifying Acceptance Rates and Satisfaction through Override Autonomy

Empirical data reveal that the capacity for users to override AI recommendations substantially increases acceptance and satisfaction rates across AI-assisted workflows. Metrics tracking override frequency indicate that allowing developers to retain final control mitigates fears of loss of agency and potential errors, leading to higher trust levels in AI systems. Studies show that transparent override mechanisms correlate with improved user satisfaction scores, as they grant psychological assurance that AI suggestions remain advisory rather than prescriptive.

Furthermore, detailed analyses of user behavior in human-AI interfaces highlight that balanced autonomy—where the AI handles routine suggestions but humans retain decisive authority—enables iterative feedback that continuously refines system outputs. This dynamic interplay facilitates a learning ecosystem in which trust is reinforced by both technical accuracy and perceived control, fostering a positive feedback loop accelerating adoption in professional environments.

Human-in-the-Loop Patterns in Financial Workflows Validating Psychological Trust

Financial sector implementations provide robust domain-specific exemplars where human-in-the-loop (HITL) oversight enhances acceptance by preserving expert judgment in critical processes. For example, AI-driven financial document processing systems perform voluminous initial analyses while deferring ambiguous or high-risk findings to financial analysts who validate and contextualize outputs. This arrangement yields significant time savings yet retains human accountability, reducing operational risk and increasing confidence in AI assistance.

These workflow models demonstrate that integrating override capabilities with defined review stages promotes trust by acknowledging AI limitations and reinforcing human cognitive authority. The human validation not only prevents error propagation but also acts as a psychological anchor, ensuring professionals remain actively engaged with AI insights without being marginalized by automation.

Case Studies on HITL Collaboration Safeguarding Code Quality and Compliance

Within software engineering and educational content development contexts, case studies confirm that collaborative human review of AI-generated artifacts is essential in maintaining accuracy, security, and ethical standards. Practices such as domain expert code reviews complement automated AI contributions by enforcing regulatory compliance and security best practices, particularly in high-stakes or regulated environments.

These examples underscore the vital role human overrides play, not only in correcting AI misjudgments but also in supplying domain nuance that automation alone cannot achieve. By empowering developers and experts to intervene, organizations sustain psychological trust and deter deskilling, ensuring that AI systems act as augmentation tools rather than replacement agents.

Having established that autonomy and override mechanisms critically underpin psychological trust and increase user acceptance in AI-assisted workflows, the report now shifts toward examining how external reputational factors and validation processes further reinforce this trust, thereby creating a multi-layered psychological safety net essential for responsible AI integration.

Reputational Strength and Cross-Disciplinary Validation Boosting Psychological Trust

This subsection delves into how reputational backing of AI tools, cross-disciplinary collaboration, and targeted training programs collectively reinforce psychological trust among users. By addressing perceived reliability and bias mitigation through external validation, it further shapes developer confidence and acceptance, which are critical pillars for sustained trust in AI-assisted software engineering.

Reputation as a Crucial Factor in AI Tool Trust Perception

The reputation of AI-assisted software engineering tools significantly influences developer trust and tool adoption decisions. Survey evidence shows that developers prioritize tools with robust reputational standing as a proxy for reliability, reducing uncertainty when integrating AI outputs into critical workflows. Organizations with well-known brand presence or strong endorsements benefit from heightened psychological assurance among users, which translates to greater initial acceptance and ongoing reliance.

Reputational strength also correlates with broader ecosystem confidence, where a tool’s history of transparent development, compliance adherence, and responsiveness to ethical concerns becomes a form of social proof. This reinforces the perception that such tools are less likely to introduce hidden biases or operational risks, particularly as AI-generated code increasingly intersects with security and privacy domains.

Cross-Disciplinary Collaboration Enhances Bias Detection and Mitigation

Empirical data support the effectiveness of cross-disciplinary teams that combine domain expertise, ethics, data science, and software engineering to address algorithmic bias comprehensively. Collaborative bias detection efforts draw on diverse perspectives and specialized knowledge to identify subtle biases that single-discipline teams might overlook. This integrative approach strengthens an organization’s capacity to continuously monitor, assess, and adapt AI systems to evolving ethical standards and regulatory frameworks.

The involvement of stakeholders across disciplines fosters a culture of accountability and shared responsibility. This environment not only uncovers biases more successfully but also proactively mitigates risks through coordinated intervention strategies. The psychological benefit manifests in increased user trust stemming from awareness that AI tools undergo rigorous, multi-faceted scrutiny beyond mere technical validation.

Employee Training Programs as Key Drivers of Bias Awareness and Trust

Structured training initiatives play a pivotal role in enhancing psychological trust by empowering employees with skills to detect and address AI biases actively. Programs designed to increase awareness of implicit biases, teach identification methods, and prescribe mitigation techniques have shown improved efficacy in organizational settings. The presence of continuous education signals a firm’s commitment to accountable AI deployment and ethical governance.

Well-implemented training fosters a proactive workforce capable of recognizing emergent risks and engaging in bias reduction practices, reinforcing a collective vigilance ethos. This heightened awareness translates to improved confidence in AI-assisted outputs, as employees feel equipped to intervene or override questionable AI decisions, thus preserving their perceived control and trust in AI workflows.

Building on the foundational importance of reputational trust, collaborative validation, and employee training, the report next examines how mitigating algorithmic bias sustains this trust, before turning to the governance frameworks that embed ethical and operational oversight throughout AI-assisted software engineering lifecycles.

Mitigating Hidden Dangers Addressing Algorithmic Biases to Sustain Psychological Trust

This subsection examines practical approaches and their effectiveness in mitigating algorithmic biases that pose hidden threats to psychological trust in AI-assisted software engineering. It complements the broader exploration of psychological trust factors by focusing on fairness-aware interventions, bias measurement, and empirical evidence, highlighting how addressing these risks sustains user confidence and system acceptance.

Effectiveness of Data Weighting and Reweighting Techniques in Bias Mitigation

Data weighting mechanisms have emerged as a vital strategy to mitigate algorithmic bias by adjusting the influence of underrepresented groups during model training. Through rebalancing input distributions, these techniques aim to align the learned representations with fairer demographic coverage and prevent disproportionate skew toward majority populations. Practical deployments demonstrate that strategic weighting can significantly reduce disparity metrics without sacrificing overall model accuracy. For example, applications in accessibility tools and natural language processing have utilized re-weighting to elevate the representation of minority groups, thereby enhancing equitable performance across demographics.

While data weighting is effective in correcting sampling and representation bias, its success depends critically on identifying relevant protected attributes and accurately estimating group distributions. Misapplication or oversimplification, such as treating diverse subgroups as homogeneous, can limit efficacy. Furthermore, excessive reliance on weighting alone, without complementary algorithmic adjustments, risks performance degradation or inadvertently introducing new biases. Hence, integration with fairness-aware training objectives and continuous monitoring is necessary for sustainable bias mitigation.
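One common way to realize the reweighting described above is to give each sample the weight P(group) x P(label) / P(group, label), so that group membership and label become statistically independent in the weighted data. The following is a minimal sketch under that assumption; the function name and the toy data are hypothetical and are not drawn from any deployment discussed in this report.

```python
from collections import Counter

def reweighing_weights(groups, labels):
    """Return one weight per sample: P(group) * P(label) / P(group, label)."""
    n = len(groups)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]

def weighted_positive_rate(groups, labels, weights, group):
    """Weighted share of positive labels within one group."""
    total = sum(w for g, w in zip(groups, weights) if g == group)
    positive = sum(w for g, y, w in zip(groups, labels, weights) if g == group and y == 1)
    return positive / total

# Hypothetical toy data: group "b" is underrepresented among positive labels.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
labels = [1, 1, 1, 0, 1, 0, 0, 0]
weights = reweighing_weights(groups, labels)

rate_a = weighted_positive_rate(groups, labels, weights, "a")
rate_b = weighted_positive_rate(groups, labels, weights, "b")
```

In this toy case the rare (group, label) combinations receive larger weights and the weighted positive rates of the two groups become equal. As the paragraph above cautions, such weights are only as good as the protected attributes and group estimates used to compute them.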

Validation of Fairness Metrics in Diverse AI Systems to Enable Trust

Fairness metrics serve as quantitative tools to detect, evaluate, and benchmark bias mitigation effectiveness. Metrics such as demographic parity (often reported as the statistical parity difference), equalized odds, and equal opportunity enable organizations to measure how closely AI outcomes align with equitable treatment across sensitive attributes. These tools not only reveal bias magnitude but also guide targeted remediation efforts and facilitate regulatory compliance.

Recent advances highlight the need for context-sensitive metric selection, as no single fairness metric universally applies across AI applications. For instance, equalized odds is more appropriate in scenarios demanding balanced error rates, such as hiring or credit scoring, whereas demographic parity suits contexts prioritizing outcome equality. Systematic use of multiple complementary metrics alongside domain-specific impact assessments enhances robustness of fairness audits and builds psychological trust by transparently demonstrating commitment to equitable AI behavior. Regular metric-based evaluations as part of AI lifecycle management enable detection of drift or emergent bias as models encounter evolving data environments.
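To make the distinction between these metrics concrete, the sketch below computes a demographic parity difference and an equalized odds difference for two groups. It is illustrative only: the function names, the binary 0/1 encoding, and the toy predictions are assumptions, and a production audit would more likely rely on an established fairness library.

```python
def rate(values):
    """Mean of a list of 0/1 values; 0.0 for an empty list."""
    return sum(values) / len(values) if values else 0.0

def demographic_parity_difference(y_pred, groups, a, b):
    """Difference in positive-prediction rates between groups a and b."""
    rate_a = rate([p for p, g in zip(y_pred, groups) if g == a])
    rate_b = rate([p for p, g in zip(y_pred, groups) if g == b])
    return rate_a - rate_b

def equalized_odds_difference(y_true, y_pred, groups, a, b):
    """Largest gap between groups in true-positive or false-positive rate."""
    def conditional_rate(group, label):
        return rate([
            p for t, p, g in zip(y_true, y_pred, groups)
            if g == group and t == label
        ])
    tpr_gap = abs(conditional_rate(a, 1) - conditional_rate(b, 1))
    fpr_gap = abs(conditional_rate(a, 0) - conditional_rate(b, 0))
    return max(tpr_gap, fpr_gap)

# Hypothetical predictions for two groups of four samples each.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

dp_gap = demographic_parity_difference(y_pred, groups, "a", "b")
eo_gap = equalized_odds_difference(y_true, y_pred, groups, "a", "b")
```

Demographic parity looks only at predictions, whereas equalized odds conditions on the true label, which is why the two can disagree on real data and why reporting several complementary metrics is recommended above.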

Case Studies Leveraging Fairness-Aware Machine Learning Techniques for Bias Reduction

Empirical evidence from case studies bolsters confidence in fairness-aware machine learning (ML) as a pragmatic approach for mitigating hidden biases. Techniques such as adversarial debiasing, fairness constraints during training, and post-processing calibration have shown promise in real-world deployments. For example, financial institutions have implemented fairness-aware ML models to reduce credit scoring disparities by dynamically adjusting model parameters based on bias feedback, resulting in markedly improved access for underrepresented demographics without compromising risk control.

Other sectors, including education and healthcare, underline the transformative potential of these techniques. Projects integrating exploratory data analysis with fairness-aware ML have successfully identified subtle biases, enabling early intervention. These case studies further confirm that embedding fairness constraints explicitly into ML pipelines, combined with robust auditing and human oversight, is critical to preventing unintentional perpetuation of systemic inequities. Such implementations contribute directly to psychological trust by demonstrating operational transparency and ethical responsibility.

Addressing algorithmic biases through rigorous data weighting, fairness measurement, and advanced ML techniques establishes a foundation for sustained psychological trust in AI-assisted software engineering. Building on these methods, subsequent sections will explore governance frameworks and technical architectures that institutionalize ethical AI deployment and continuous bias mitigation.

4. Governance Structures Aligning Ethics Operations and Compliance

Industry Standards and Regulatory Alignment Frameworks Shaping Global AI Governance

This subsection establishes the foundational context for governance by cataloging major AI governance standards and frameworks globally. By comparing multi-stakeholder and regulatory initiatives, it highlights how these standards converge and diverge in their approaches to ethical AI, compliance, and operationalization. This forms a critical basis for understanding governance expectations in AI-assisted software engineering, ensuring that ethical, legal, and technical requirements are coherently integrated across jurisdictions and industries.

Emerging Global AI Governance Standards Defining Compliance Expectations

By 2026, AI governance standards have crystallized into distinct yet overlapping bodies shaping enterprise compliance. The European Union’s AI Act represents the most comprehensive legislative framework, codifying transparency, risk management, and human oversight obligations for high-risk AI systems. Its enforceable mandates set a high bar for accountability and fairness, particularly within software engineering processes that leverage AI for development and deployment. Alongside these legislative developments, the OECD AI Principles have underpinned international efforts emphasizing foundational elements such as privacy, fairness, and robustness, helping to harmonize ethical considerations across diverse regulatory regimes.

In parallel, multiple organizations have developed sector- or function-specific governance guidelines. IEEE’s Ethically Aligned Design guidance and its related standards work provide detailed technical direction for AI engineering practice, driving ethical integration from design through deployment. Meanwhile, the Partnership on AI offers multi-stakeholder best practices emphasizing responsible development and operational transparency, reflecting consensus across academia, industry, and civil society. The financial sector’s AI ethics principles exemplify how industry consortia tailor governance frameworks to address domain-specific risks, including bias prevention and explainability, thereby influencing broader software governance approaches.

Comparative Analysis of Major Ethical AI Frameworks and Their Operational Roles

Although ethical AI frameworks share core principles—such as fairness, accountability, transparency, and privacy—their operational focus and enforcement mechanisms differ significantly. IEEE’s framework centers on embedding ethics into AI system design, prioritizing human-centered values, safety, and transparency to guide engineering practices. By contrast, regulatory instruments like the EU AI Act impose legally binding requirements with stipulated penalties for non-compliance, influencing not only engineering but the entire AI product lifecycle including procurement, validation, and auditability.

Multi-stakeholder initiatives such as the Partnership on AI align ethical vision with pragmatic operational guidance, enabling organizations to balance compliance and innovation. These frameworks often function in a complementary manner, with regulatory mandates augmented by industry best practices and standards that provide implementation roadmaps. Enterprises deploying AI-assisted software engineering increasingly adopt a hybrid governance approach, reconciling the stringent demands of binding regulations with the flexibility of voluntary standards to optimize responsible AI adoption.

Current Adoption Trends and Multi-Stakeholder Implementation Status in 2026

By mid-2026, adoption of AI governance frameworks demonstrates a global mosaic of maturity levels shaped by regional regulations and organizational priorities. The EU’s legislative leadership, exemplified by active enforcement of the AI Act, compels organizations operating within or in connection with the EU market to prioritize compliance as a core operational requirement. Meanwhile, China advances state-driven standards emphasizing social stability and accountability, with enterprises required to align internal governance with national interests and rapid enforcement mechanisms.

South Korea’s recent enactment of comprehensive AI legislation and increasing legislative activity across US states reflect accelerating regulatory momentum worldwide. Global enterprises respond by building integrated governance models customized to their operational risk appetite, often incorporating multi-stakeholder recommendations to enhance ethical robustness. However, gaps persist, especially in harmonizing cross-border compliance and integrating emerging standards into legacy IT and software engineering processes, underscoring ongoing challenges in achieving full governance alignment.

Having outlined the global landscape of AI governance standards and regulatory frameworks, the report now progresses to exploring how these governance structures extend operationally across the AI lifecycle. The next subsection will delve into the mechanisms by which ethics, compliance, and quality controls are integrated throughout development, deployment, and maintenance phases, thereby moving from abstract principles toward actionable governance models.

Lifecycle Integration Embedding Preventive Controls and Automation for Robust AI Governance

This subsection examines how governance frameworks are pragmatically integrated across the AI-assisted software engineering lifecycle, emphasizing preventive controls that span design, development, deployment, and maintenance. By focusing on automation-enabled scaling and comprehensive documentation practices, it highlights mechanisms that transform governance from a static compliance checkbox into a dynamic enabler of trust and quality assurance throughout continuous integration and delivery pipelines.

Metrics Demonstrating Successful CI/CD Governance Embedding in AI Pipelines

Modern AI-assisted software engineering increasingly relies on embedding governance controls directly into continuous integration and continuous delivery (CI/CD) pipelines. This integration ensures preventive quality measures are active at each development stage rather than retrofitted post-deployment. By automating quality validation protocols and security checks, organizations achieve rapid detection and resolution of compliance deviations, effectively reducing defect density and compliance incidents.

Quantitative evidence from recent implementations indicates that pipelines with embedded governance controls report substantial improvements in compliance adherence and defect management metrics. For example, automated accessibility and bias checks integrated within CI/CD workflows enforce minimum quality thresholds that must be met before code promotion, preventing regression and fostering a culture of continuous quality assurance. Automated bias detection improves issue detection speed by 40% compared with manual processes, enhancing responsiveness and reducing risk exposure during development cycles [Chart: Risk Reduction through Bias Detection]. Consequently, such pipelines shorten delivery cycles while maintaining regulatory alignment and ethical standards.
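A promotion gate of the kind described above can be sketched as a small check that a pipeline step runs before promoting a build. The metric names and threshold values here are hypothetical placeholders; actual thresholds would come from an organization's governance policy, and the metrics would be produced by earlier pipeline stages.

```python
# Hypothetical policy thresholds; real values would come from governance policy.
THRESHOLDS = {
    "max_bias_gap": 0.10,        # largest tolerated fairness-metric gap
    "min_accessibility": 0.90,   # minimum accessibility check pass rate
    "max_critical_findings": 0,  # critical security findings allowed
}

def evaluate_gate(metrics, thresholds=THRESHOLDS):
    """Return a list of violations; an empty list means the build may be promoted."""
    violations = []
    if metrics["bias_gap"] > thresholds["max_bias_gap"]:
        violations.append(
            f"bias gap {metrics['bias_gap']:.2f} exceeds {thresholds['max_bias_gap']:.2f}"
        )
    if metrics["accessibility"] < thresholds["min_accessibility"]:
        violations.append(
            f"accessibility {metrics['accessibility']:.2f} below {thresholds['min_accessibility']:.2f}"
        )
    if metrics["critical_findings"] > thresholds["max_critical_findings"]:
        violations.append(
            f"{metrics['critical_findings']} critical security findings present"
        )
    return violations

def exit_code(metrics):
    """Map the gate result to a process exit code; non-zero blocks promotion."""
    return 1 if evaluate_gate(metrics) else 0

# Hypothetical metric reports from two pipeline runs.
passing = {"bias_gap": 0.04, "accessibility": 0.95, "critical_findings": 0}
failing = {"bias_gap": 0.18, "accessibility": 0.95, "critical_findings": 2}
```

Returning the list of violations, instead of a bare pass or fail, keeps the reason for a blocked promotion visible to developers, which supports the transparency goals discussed earlier in the report.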

Case Studies Validating the Impact of Immutable Audit Trails on Compliance and Trust

Immutable audit trails have become foundational components in trustworthy AI governance, especially when integrated across the AI lifecycle. Case studies indicate that organizations implementing detailed, tamper-proof logging of every AI model interaction, data modification, and decision rationale significantly enhance regulatory compliance and internal accountability.

These audit systems provide comprehensive provenance tracking, enabling retrospective analyses that verify adherence to ethical, security, and operational standards. By maintaining exhaustive records, organizations reduce friction during audits and incident investigations, build stakeholder confidence, and facilitate faster identification and correction of anomalies, thereby cultivating enduring trust in AI-assisted engineering processes.
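One widely used building block for such trails is a hash chain, in which every entry stores the hash of its predecessor so that any later modification becomes detectable. The sketch below is a simplified illustration and does not describe any specific product: the field names and sample events are hypothetical, and on its own a hash chain is tamper-evident rather than tamper-proof, so real deployments typically pair it with write-once storage or external anchoring.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry

def _digest(prev_hash, payload):
    """Hash the previous entry's hash together with a canonical JSON payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()

def append_entry(log, payload):
    """Append a payload to the log, linking it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {
        "payload": payload,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, payload),
    }
    log.append(entry)
    return entry

def verify_chain(log):
    """Recompute every hash; return False if any entry or link was altered."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True

# Hypothetical events from an AI-assisted change.
audit_log = []
append_entry(audit_log, {"actor": "assistant", "action": "suggest_patch"})
append_entry(audit_log, {"actor": "reviewer", "action": "approve_patch"})
chain_ok = verify_chain(audit_log)
```

Editing any stored payload after the fact changes its recomputed hash, so `verify_chain` returns False for the altered log.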

Automation Tools Scaling Preventive Governance Across AI Development Lifecycles

Automation is pivotal in scaling governance measures across the expanding scope and speed of AI development efforts. Modern governance frameworks leverage advanced tooling to embed ethics, compliance, and quality validation into repeatable, automated pipelines that operate continuously rather than episodically.

Tools now incorporate bias detection, security vulnerability scanning, model versioning, and compliance assessment natively within CI/CD workflows, enabling governance to keep pace with rapid iteration cycles. This automated approach mitigates risks associated with manual oversight gaps, supports risk-aware deployment decision-making, and ensures dynamic enforcement of policies tailored to organizational risk appetites, thus fostering resilient governance ecosystems.

Building on the demonstrated efficacy of lifecycle-embedded governance and automation-enforced preventive controls, the report will next explore forward-looking governance paradigms that proactively anticipate evolving AI capabilities and maintain adaptive compliance and operational integrity.

Adaptive and Proactive Governance Models Anticipating Expanding AI Capabilities and Risk Landscapes

This subsection explores forward-looking governance frameworks designed to evolve alongside rapidly advancing AI technologies. Positioned within the broader governance discussion, it addresses the imperative of dynamic and anticipatory models that not only manage existing AI risks but also adapt to emerging challenges, particularly with large language models (LLMs) becoming integral to software engineering. The content bridges high-level principles with operational practices, illustrating how organizations can embed continuous compliance and risk-aware oversight to maintain ethical integrity while accelerating innovation.

Contemporary Risk-Aware AI Governance Frameworks in 2025

Recent developments in AI governance emphasize risk calibration commensurate with AI system complexity and potential impact, moving beyond one-size-fits-all approaches. Risk-aware frameworks systematically assess AI artifacts’ lifecycle stages to tailor controls that mitigate ethical, operational, and security threats. For instance, established frameworks integrate fairness audits, transparency mandates, and accountability mechanisms, aligning with global standards while enabling scalable compliance across industries. This risk-tiered stratification recognizes AI’s dual-use nature and evolving threat vectors, foregrounding governance as a strategic enabler rather than a bureaucratic hurdle.

The 2025 landscape witnessed a proliferation of model governance best practices incorporating automated control points integrated into development workflows. These frameworks operationalize continuous risk assessment and validation protocols to detect drift, bias, and anomalous behaviors early. By embedding governance directly into development pipelines, organizations reduce compliance lag, improve audit readiness, and enhance stakeholder trust, demonstrating a paradigm shift from retrospective checks to proactive assurance.

Operationalizing Continuous Compliance for Large Language Models in Software Engineering

As LLMs increasingly assist in generating, reviewing, and optimizing code, governance models must address the unique challenges posed by their scale, opacity, and dynamic evolution. Leading organizations have adopted governance architectures that embed continuous compliance checks for LLM outputs, including prompt provenance tracking, documentation of model versions, and annotation of risk signatures. These measures ensure traceability of AI-generated recommendations and facilitate human oversight at critical decision junctures.
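The traceability measures listed above can be pictured as a small record attached to each AI-generated artifact. The following sketch is an assumption about what such a record might contain; the class, field names, and risk tags are hypothetical and do not correspond to any particular tool or standard.

```python
import hashlib
from dataclasses import dataclass, field
from datetime import datetime, timezone

@dataclass(frozen=True)
class ProvenanceRecord:
    """Immutable description of where an AI-generated artifact came from."""
    prompt_sha256: str   # hash of the prompt, so the prompt text need not be stored
    model_version: str   # identifier of the model that produced the output
    artifact_path: str   # file or artifact the output was written to
    risk_tags: tuple = ()  # hypothetical risk annotations, e.g. ("auth",)
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

def record_for(prompt, model_version, artifact_path, risk_tags=()):
    """Build a provenance record for one generated artifact."""
    return ProvenanceRecord(
        prompt_sha256=hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        model_version=model_version,
        artifact_path=artifact_path,
        risk_tags=tuple(risk_tags),
    )

def needs_human_review(record, blocking_tags=frozenset({"auth", "crypto", "payments"})):
    """Route the artifact to a human reviewer if it carries a blocking risk tag."""
    return any(tag in blocking_tags for tag in record.risk_tags)

# Hypothetical usage for a generated login handler.
rec = record_for("Add login handler", "example-model-1", "src/login.py", ["auth"])
review_required = needs_human_review(rec)
```

Storing a hash of the prompt instead of its text is one possible design choice: it allows later verification against an archived prompt without copying potentially sensitive content into every record.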

Operationalizing continuous governance also involves integrating compliance into CI/CD pipelines where AI-generated artifacts undergo automated bias and security scans alongside traditional quality checks. Real-time metrics on model performance and alignment with ethical standards feed back into governance councils for adaptive policy refinement. This cyclical model supports not only audit compliance but also the practical demands of rapid, iterative software delivery augmented by intelligent agents.

Benchmarking Governance Architectures to Accelerate Compliant Innovation

Benchmarking evidence reveals that organizations developing customized internal governance frameworks—tailored to their specific risk appetite and operational context—significantly outperform reactive competitors in innovation velocity and compliance adherence. Such frameworks balance risk control with flexibility through modular controls, comprehensive documentation, and governance automation. This tailored approach empowers engineering teams to deploy AI-assisted software confidently, mitigating latent governance gaps that previously delayed product launches or escalated incident rates.

Metrics associated with advanced governance models show fewer compliance bottlenecks, clearer accountability matrices, and improved stakeholder confidence. The integration of governance into product lifecycle management reduces post-deployment defects linked to AI code artifacts and aligns technical teams with evolving regulatory landscapes more effectively. Such organizational commitment positions firms not only to meet increasingly stringent AI regulations but also to leverage governance as a competitive advantage driving strategic differentiation.

Having established the imperatives and practicalities of adaptive governance, the report next turns to the delegation, containment, reconstruction, and verification cycle that translates these governance principles into operational safeguards against systemic failures and emergent threats.

Delegation, Containment, Reconstruction, and Verification: Engineering a Robust Governance Cycle

This subsection delves into a sophisticated governance cycle essential for AI-assisted software engineering—one that advances from reactive oversight toward proactive, risk-tiered management. By examining delegation engineering's calibration of autonomy, containment of failures, reconstruction of events, and verification of compliance, this analysis elucidates how layered governance fosters trustworthiness without impeding the rapid iteration demands of modern AI systems. Understanding these interconnected disciplines is critical for operationalizing governance frameworks that ensure accountability, safety, and compliance in increasingly autonomous software environments.

Assessing Delegation Risk Tiering and Its Impact on Governance Outcomes

Delegation engineering introduces a granular approach to distributing decision authority across AI and human agents, calibrating the 'autonomy envelope' based on task complexity and risk level. This tiered delegation reduces governance burden by limiting AI agent autonomy to contexts where reversibility and oversight mechanisms are strong, thus mitigating catastrophic failures. Quantitative studies demonstrate that when delegation is aligned with uncertainty metrics, such as the entropy of a model’s output distribution, AI systems more reliably defer decisions requiring human judgment, significantly lowering error propagation and enhancing overall system robustness. This calibration is dynamic, continuously adjusted to reflect evolving AI capabilities and operational environments.

Effective delegation risk tiering ensures that autonomy does not become a single point of systemic risk. By embedding layered decision rights and clearly defined boundaries, governance architects can tailor operational envelopes that balance innovation speed with control. Empirical evidence from agentic systems operating in regulated industries shows this approach enables scalable trust without sacrificing compliance, as autonomy calibrations directly inform and constrain the system’s permissible actions within predefined safety parameters.

Best Practices for Calibrating the Autonomy Envelope to Contain Failures

Calibration of the autonomy envelope entails setting precise operational thresholds that determine when AI agents can act independently and when they must defer to human oversight. Proven methods include leveraging uncertainty estimation techniques from internal model states to dynamically adjust autonomy based on confidence metrics. For instance, when a large language model exhibits high entropy in its output token distributions, the system narrows the scope of autonomous action accordingly.
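The entropy-based adjustment described above can be illustrated with Shannon entropy, H = -sum(p * log2 p), averaged over the per-token output distributions of a generation. This is a minimal sketch only: the tier names and the threshold values are hypothetical, and a real system would calibrate them empirically and would usually combine entropy with other risk signals.

```python
import math

def shannon_entropy(probs):
    """Entropy in bits of one probability distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def mean_token_entropy(token_distributions):
    """Average entropy across the per-token distributions of one output."""
    return sum(shannon_entropy(d) for d in token_distributions) / len(token_distributions)

def autonomy_tier(mean_entropy, auto_max=0.5, review_max=1.5):
    """Map uncertainty to an autonomy tier; thresholds (in bits) are hypothetical."""
    if mean_entropy <= auto_max:
        return "act_autonomously"
    if mean_entropy <= review_max:
        return "propose_for_review"
    return "defer_to_human"

# Hypothetical per-token distributions over a four-token vocabulary.
confident_output = [[0.97, 0.01, 0.01, 0.01]]
uncertain_output = [[0.25, 0.25, 0.25, 0.25]]

confident_tier = autonomy_tier(mean_token_entropy(confident_output))
uncertain_tier = autonomy_tier(mean_token_entropy(uncertain_output))
```

A sharply peaked distribution yields low entropy and keeps the agent in its autonomous tier, while a uniform distribution over four options yields 2 bits and triggers deferral to a human.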

Safety engineering principles reinforce this containment by implementing fail-safe layers that monitor agent behavior, detect deviations, and automatically trigger containment protocols. These include sandboxed execution zones, rollback mechanisms, and strict interface controls that prevent error propagation beyond defined limits. Together, these calibrated envelopes create a resilient governance scaffold that actively constrains autonomy failures, ensuring prompt human intervention and minimizing blast radius effects.

Techniques for Reconstruction and Accountability Engineering in Compliance Verification

Accountability engineering focuses on reconstructing the sequence of AI system actions to verify compliance post hoc and support forensic analysis. This involves comprehensive provenance tracking that logs every relevant input, decision point, and system output with immutable audit trails. Such detailed reconstructions enable organizations to conduct precise incident investigations, validating that AI agents behaved according to governance policies and standards.

Advanced reconstruction methods integrate layered verification that does not rely solely on AI-generated self-reports, but cross-validates system logs, behavioral anomalies, and external audit inputs. This multi-source corroboration strengthens accountability by ensuring transparency and traceability. Moreover, reconstruction capabilities support continuous learning loops by feeding retrospective insights back into governance frameworks, enhancing future risk assessments and compliance measures.

The delegation-containment-reconstruction-verification cycle crystallizes a governance paradigm that tightly integrates autonomy calibration with comprehensive oversight and forensic capabilities. This layered approach paves the way for embedding trust and accountability at scale within AI-assisted software engineering, enabling organizations to deploy autonomous agents confidently while maintaining rigorous control and compliance assurance.

5. Quality Assurance Dimensions Multidimensional Assessment and Human Oversight

Rigorous Evaluation Metrics Unveiling Defect Reduction and Security Challenges in AI-Generated Code

This subsection provides a detailed examination of how AI-assisted software engineering quantitatively improves code quality through measurable defect reductions while concurrently introducing specific security vulnerabilities that require targeted mitigation. By analyzing current technical metrics and real-world performance data, it clarifies the dual-edged nature of AI integration in software development and informs strategic quality assurance decisions.

Quantifying Defect Reduction: Statistical Evidence of AI-Induced Quality Improvements

Extensive empirical studies have demonstrated that AI-assisted development tools contribute to significant reductions in software defects. Automated testing frameworks powered by AI enable faster creation, execution, and maintenance of test cases, resulting in defect reductions ranging from 20% to 40%. These improvements translate into more stable and robust software releases, mitigating the traditional time and complexity demands associated with manual testing cycles. Additionally, defect prediction models utilizing machine learning techniques enhance early identification of high-risk code areas, further decreasing latent bugs before deployment. Experimental analyses of AI-driven code review systems show precision rates exceeding 85% and recall rates between 78% and 82%, achieving balanced defect detection and verifying the reliability of AI augmentation in quality assurance workflows. The integration of AI thus not only accelerates development but also enhances the overall defect management process by providing earlier and more accurate detection capabilities.
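The balance between precision and recall cited above is commonly summarized by the F1 score, the harmonic mean F1 = 2PR / (P + R). The short calculation below applies it to the reported figures, treating 85% precision as a lower bound, which is an assumption because the studies report precision as exceeding that value.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall; 0.0 if both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# Reported ranges: precision of at least 0.85, recall between 0.78 and 0.82.
f1_low = f1_score(0.85, 0.78)   # roughly 0.81
f1_high = f1_score(0.85, 0.82)  # roughly 0.83
```

Under these assumptions the implied F1 score falls between roughly 0.81 and 0.83, with higher values possible wherever precision exceeds 85%.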

Moreover, longitudinal research reveals that these defect reductions are not limited to superficial or trivial errors; AI assistance substantially curtails critical defects that directly impact system reliability and security. Reports from industrial applications affirm productivity gains alongside measurable improvements in defect severity distributions, confirming AI’s role in elevating software quality at multiple levels of the development lifecycle. This evidence affirms that AI’s value extends beyond automation to delivering substantive quality advancements aligned with organizational risk reduction objectives.

Characterizing Security Vulnerabilities: Persistent Risks in AI-Generated Software Code

Despite AI’s benefits in defect detection and testing automation, security vulnerabilities remain a persistent and critical challenge in AI-generated code. Industry-wide security assessments reveal that up to 45% of code produced by AI coding assistants contains exploitable weaknesses, including prevalent issues such as cross-site scripting, SQL injection, and log injection. These vulnerabilities often arise because AI models, while adept at syntactic correctness, lack contextual understanding of security constraints and threat models, resulting in subtle flaws that can evade conventional detection. Furthermore, studies indicate that AI-generated code may embed insecure access controls, expose credential information, or reference non-existent external dependencies, substantially increasing the attack surface.
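SQL injection, one of the weaknesses named above, is easy to show in a few lines. The example below contrasts a query built by string interpolation with a parameterized query, using Python's standard `sqlite3` module and an in-memory database. The table, function names, and payload are hypothetical and chosen only for illustration.

```python
import sqlite3

def find_user_unsafe(conn, name):
    """Vulnerable: user input is interpolated directly into the SQL text."""
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()

def find_user_safe(conn, name):
    """Safer: the driver binds the value, so it is never parsed as SQL."""
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()

# Hypothetical in-memory database with two users.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

# A classic injection payload that turns the WHERE clause into a tautology.
payload = "nobody' OR '1'='1"

leaked_rows = find_user_unsafe(conn, payload)  # returns every row in the table
safe_rows = find_user_safe(conn, payload)      # returns no rows
```

Both functions are syntactically valid and behave identically on benign input, which illustrates why this class of flaw can pass functional tests and still reach production without security-focused review.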

Recent research highlights that these security gaps are not mitigated by increasing model size or sophistication, underscoring a systemic limitation in current generative AI coding approaches. The prolific adoption of AI tools without rigorous oversight can inadvertently propagate vulnerabilities throughout software supply chains. This risk necessitates comprehensive auditing frameworks, embedding secure coding practices, and enforcing human-in-the-loop review to prevent deploying insecure AI-generated artifacts into production environments. Organizations must concurrently invest in specialized security analysis tools tailored to detect AI-specific vulnerabilities and maintain continuous monitoring to rapidly address emerging threats introduced via automated code generation.

Having established the measurable quality gains and persistent security challenges of AI-assisted coding, the subsequent subsection will explore bias detection and mitigation techniques as integral components of ethical quality assurance frameworks that ensure fairness and compliance in AI-generated software.

Bias Detection and Mitigation Crucial for Ethical Integrity in AI-Generated Code

This subsection examines the imperative of detecting and mitigating biases within AI-assisted code generation to uphold both ethical and technical quality standards. It connects broader quality assurance objectives with the ethical dimension of fairness, demonstrating how algorithmic bias directly undermines software reliability, compliance, and user trust. The analysis highlights established mitigation techniques, their efficacy, and measurable impacts on software quality and fairness metrics, advancing actionable insight for embedding ethical integrity into AI-assisted software engineering workflows.

Effective Bias Mitigation Algorithms and Their Success Rates

Robust bias mitigation in AI-assisted coding hinges on employing a range of specialized algorithmic techniques designed to identify, counteract, and correct discriminatory patterns embedded in training data and model behavior. Leading approaches include adversarial debiasing, in which the primary model is trained alongside an adversary network that tries to predict sensitive attributes from the model's outputs or internal representations; the primary model is penalized whenever the adversary succeeds, which reduces the correlation between its predictions and those attributes. This method has shown effectiveness in reducing bias without substantial loss in predictive accuracy when the two objectives are carefully balanced.

Other prominent techniques focus on data rebalancing, including oversampling underrepresented classes and synthetic data augmentation. These methods adjust training set distributions to better reflect diverse populations, thereby lowering systemic skewness in AI code outputs. Post-processing calibration techniques refine model predictions to achieve balanced error rates across demographic or categorical groups, facilitating fairness without requiring retraining.

Quantitative evaluations have demonstrated that integrating such mitigation strategies can achieve significant fairness improvements in AI systems. For example, healthcare AI applications employing pre-processing reweighting combined with in-processing adversarial debiasing have reported up to a 20% enhancement in fairness indicators, such as reduced false negative disparities among minority groups, with negligible detriment to overall model performance. However, trade-offs persist: modest reductions in accuracy are often accepted in exchange for improved equity, underscoring the importance of application-specific calibration and stakeholder prioritization.
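As an illustration of what pre-processing reweighting can look like in practice, the sketch below implements one common formulation, usually attributed to Kamiran and Calders, in which each training example receives the weight P(group) x P(label) / P(group, label). Whether the studies summarized above used this exact formulation is not stated, so it should be read as an assumption; the function names and toy data are hypothetical.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Return one weight per example so that group and label become
    statistically independent in the weighted training set."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    # weight = expected count under independence / observed count
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(groups, labels, weights, group):
    # Share of the group's total weight that falls on positive labels.
    total = sum(w for g, w in zip(groups, weights) if g == group)
    positive = sum(
        w for g, y, w in zip(groups, labels, weights) if g == group and y == 1
    )
    return positive / total


if __name__ == "__main__":
    # Toy data: group "a" has mostly positive labels, group "b" mostly negative.
    groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
    labels = [1, 1, 1, 0, 1, 0, 0, 0]
    weights = reweighing_weights(groups, labels)
    for g in ("a", "b"):
        print(g, weighted_positive_rate(groups, labels, weights, g))
```

In the toy data the unweighted positive rates are 75% and 25%; after reweighting both groups carry the same weighted positive rate, which is the property a downstream learner that accepts sample weights would then train against.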

Quantitative Impacts of Bias on Software Quality and Ethical Outcomes

Bias in AI-generated code manifests not only as ethical concerns but also as tangible degradations in software quality metrics, including defect rates, security vulnerabilities, and maintainability challenges. Unmitigated biases may propagate flawed assumptions that skew program logic, reduce code correctness, and jeopardize compliance with regulatory and domain-specific standards, especially in critical fields such as finance, healthcare, and public sector applications.

Research evidence links algorithmic bias to measurable declines in software quality indicators. For instance, biased training inputs can lead AI code generators to produce outputs with latent defects, higher cyclomatic complexity, or fragile error-handling paths that disproportionately affect particular user scenarios. These technical degradations complicate long-term software evolution, increase maintenance overhead, and elevate operational risks. Moreover, cognitive biases embedded in development processes exacerbate these effects by diminishing the efficacy of automated quality gates and human oversight mechanisms.

Bias detection tools and fairness evaluation frameworks serve as essential pillars to quantify and control these risks. Metrics such as statistical parity difference, disparate impact ratio, and equal opportunity difference offer rigorous measures to monitor fairness levels over time and across software releases. In applied scenarios, bias mitigation interventions have also improved fairness metrics in AI code generation by reducing skew and enhancing representational equity, thereby reinforcing software systems' ethical integrity along with technical robustness.
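The three metrics named above can be computed directly from predictions, ground-truth labels, and a group attribute. The sketch below uses the conventional definitions (unprivileged minus privileged for the two differences, unprivileged over privileged for the ratio); the function names and toy data are hypothetical, and the code assumes every group contains at least one example and at least one true positive.

```python
def selection_rate(y_pred, groups, group):
    # Fraction of the group that receives a positive prediction.
    preds = [p for p, g in zip(y_pred, groups) if g == group]
    return sum(preds) / len(preds)


def true_positive_rate(y_true, y_pred, groups, group):
    # Among the group's truly positive cases, the fraction predicted positive.
    hits = [p for t, p, g in zip(y_true, y_pred, groups) if g == group and t == 1]
    return sum(hits) / len(hits)


def fairness_report(y_true, y_pred, groups, unprivileged, privileged):
    sr_u = selection_rate(y_pred, groups, unprivileged)
    sr_p = selection_rate(y_pred, groups, privileged)
    tpr_u = true_positive_rate(y_true, y_pred, groups, unprivileged)
    tpr_p = true_positive_rate(y_true, y_pred, groups, privileged)
    return {
        # 0 means equal selection rates across groups.
        "statistical_parity_difference": sr_u - sr_p,
        # 1 means equal selection rates across groups.
        "disparate_impact_ratio": sr_u / sr_p,
        # 0 means equal true positive rates across groups.
        "equal_opportunity_difference": tpr_u - tpr_p,
    }


if __name__ == "__main__":
    groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
    y_true = [1, 1, 0, 0, 1, 1, 0, 0]
    y_pred = [1, 0, 0, 0, 1, 1, 1, 0]
    print(fairness_report(y_true, y_pred, groups, unprivileged="a", privileged="b"))
```

Recomputing such a report for each release, on a fixed evaluation set, is one simple way to track fairness levels over time as the paragraph above describes.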

Having established the criticality of algorithmic bias detection and mitigation for ensuring ethical integrity and its measurable impact on software quality, the next subsection turns to immutable audit trails and traceability, which complement bias controls by recording how AI-generated outputs were produced, reviewed, and modified.

Immutable Audit Trails and Traceability Chronological Capture Ensuring Robust Governance and Quality in AI-Assisted Software Engineering

This subsection addresses the foundational role of immutable audit trails and traceability mechanisms in maintaining quality assurance and governance integrity within AI-assisted software engineering. It elucidates how comprehensive, tamper-evident logs not only enable compliance with increasingly stringent regulations, but also foster accountability, facilitate forensic incident analysis, and support continuous improvement processes critical to sustaining trust in AI-generated outputs.

Establishing Audit Trail Implementation Standards for Reliable AI Code Governance

Industry leaders and regulatory frameworks increasingly emphasize the necessity of implementing immutable audit trails within AI-assisted software development pipelines. These trails must capture granular, timestamped records of every AI interaction, including prompt inputs, generated outputs, user interventions, and modification histories, thereby creating an unalterable chronological chain preserving the entire decision-making narrative. By integrating audit trails directly with version control systems and continuous integration/continuous deployment (CI/CD) workflows, organizations ensure seamless provenance tracking of AI-generated code artifacts alongside corresponding development tickets or feature requests. This dual-trace approach enhances transparency by linking AI contributions explicitly to project contexts, thereby supporting reproducibility and compliance auditing demands.

To achieve reliability and regulatory acceptability, audit systems should adhere to established information security standards, incorporating tamper-evident storage, cryptographic signatures, and access controls to guard against unauthorized alterations. Maintaining separate immutable logs for AI prompt activity and developer review notes enables detailed forensic analyses, revealing patterns of failure or bias embedded in AI outputs. This comprehensive logging practice is regarded as a foundational governance element across sectors with high compliance needs, including finance, healthcare, and public administration; it moves transparency in AI-assisted code generation beyond minimum compliance and into practical operational control.
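One simple way to make a log tamper-evident is to chain entries by hash, so that each record commits to the one before it. The sketch below is a minimal illustration using only the Python standard library; the field names, event types, and ticket identifier are hypothetical, and a production system would additionally sign entries and anchor the latest hash in write-once or externally held storage, since a hash chain alone does not stop someone who can rewrite the entire log.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder predecessor for the first entry


def _digest(body):
    # Canonical JSON so the same content always yields the same hash.
    payload = json.dumps(body, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(log, event_type, payload, timestamp=None):
    """Append a timestamped record that commits to the previous record's hash."""
    body = {
        "index": len(log),
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
        "event_type": event_type,  # e.g. "prompt", "generation", "review"
        "payload": payload,        # must be JSON-serializable
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    entry = dict(body, hash=_digest(body))
    log.append(entry)
    return entry


def verify_chain(log):
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for position, entry in enumerate(log):
        body = {key: value for key, value in entry.items() if key != "hash"}
        if entry["index"] != position:
            return False
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    log = []
    append_entry(log, "prompt", {"ticket": "DEMO-1", "text": "add input validation"})
    append_entry(log, "generation", {"ticket": "DEMO-1", "output": "def validate(): ..."})
    append_entry(log, "review", {"ticket": "DEMO-1", "decision": "approved"})
    print(verify_chain(log))
    log[1]["payload"]["output"] = "edited after the fact"
    print(verify_chain(log))
```

Carrying the ticket identifier in every payload mirrors the dual-trace idea of linking each AI interaction to its development ticket, while the separate event types keep prompt activity distinguishable from review notes during forensic analysis.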

Practical Benefits Demonstrated Through Incident Resolution Using Audit Trail Analysis

The availability of detailed, immutable audit trails has proven invaluable in multiple real-world scenarios for diagnosing, remediating, and preventing recurrence of AI-related quality and security incidents. For instance, in regulated environments, layered audit logs helped isolate root causes of automated decision errors by correlating specific input prompts to anomalous generated code, enabling rapid identification of logic flaws and bias triggers. This granular traceability facilitated targeted corrective actions such as retraining models with adjusted data weighting or revising prompt engineering standards, thereby improving both fairness and functional correctness.

Additionally, comprehensive logs support compliance with legal and regulatory requirements by providing verifiable evidence of decision rationale and fidelity to approved processes during external audits or judicial reviews. Detailed audit trails enable forensic teams to reconstruct event timelines for liability assessments and to ascertain how human reviewers interacted with AI tooling. Internal governance also benefits as the documented provenance supports knowledge retention and knowledge transfer across teams, enabling continuous learning loops where observed defects feed back into improved validation procedures. Together, these concrete governance utilities underscore that audit trail implementation is not merely a bureaucratic checkbox but a strategic enabler of sustainable trust and operational resilience in AI-assisted engineering.

Furthermore, adopting transparency mechanisms such as immutable audit trails correlates with measurable productivity gains over time. Reported data link early adoption of such measures in AI-assisted workflows to productivity improvements that rise from 0% in the first year to 20% in the second year and up to 30% by the third year, indicating the strategic value of integrating traceability for both governance and operational effectiveness [Chart: Productivity Gains Linked to Transparency].

Having established the critical role of immutable audit trails in underpinning trustworthy AI-assisted software development, subsequent sections expand on complementary governance practices, including structured human oversight and adaptive risk management frameworks that leverage traceability artifacts for enhanced decision-making and regulatory compliance.

Structured Review Processes Gatekeeping Quality Gates in AI-Assisted Code Production

This subsection investigates how structured review frameworks combining AI-driven automation and human judgment serve as critical quality gates in AI-assisted software engineering. It focuses on quantifying defect reductions achieved through these hybrid approaches and examines how review process customization according to team size and complexity optimizes efficiency and quality assurance outcomes.

Quantifying Defect Reduction Achieved by Hybrid Human-AI Review Frameworks

Empirical research consistently demonstrates that integrating human expertise with automated AI-driven code review markedly enhances defect detection performance. Multi-tiered review processes that use static and dynamic automated analyses as a first validation step, followed by structured human evaluation focusing on architectural alignment, maintainability, and business logic, have been shown to reduce production defects by over 50% relative to traditional manual reviews. This significant improvement is achieved with minimal impact on development velocity, highlighting the synergistic benefits of balancing automation speed with human contextual insight.

Beyond defect reduction, hybrid review systems improve overall code reliability by leveraging AI's rapid identification of syntactic errors, style inconsistencies, and known security vulnerabilities, while human reviewers assess nuanced concerns beyond AI's current capabilities. Studies reveal that AI tools in the review pipeline consistently maintain precision rates exceeding 85% for identifying genuine issues and recall rates near 80%, meaning that most flagged issues are real and roughly four in five genuine defects are caught automatically. Because a recall near 80% still leaves some defects undetected, coupling these tools with human judgment creates a more robust quality gate that mitigates risks inherent to AI-generated artifacts in software products.
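To put the precision and recall figures in perspective, the short sketch below combines them into an F1 score and estimates how many genuine defects an automated reviewer would leave for humans to find. It assumes, for illustration only, that precision of 0.85 and recall of 0.80 were measured on the same evaluation set; the defect count of 100 is a hypothetical round number.

```python
def f1_score(precision, recall):
    # Harmonic mean of precision and recall.
    return 2 * precision * recall / (precision + recall)


def expected_missed(true_defects, recall):
    # Genuine defects the automated pass is expected to leave undetected.
    return true_defects * (1 - recall)


if __name__ == "__main__":
    precision, recall = 0.85, 0.80
    print(round(f1_score(precision, recall), 3))  # 0.824
    print(round(expected_missed(100, recall)))    # 20
```

Under these assumptions roughly 20 of every 100 genuine defects would pass the automated stage, which is the gap the structured human evaluation step is meant to cover.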

Customization of Review Frameworks to Optimize Quality Gates by Team Size and Complexity

The effectiveness of review processes depends heavily on tailoring procedures to organizational scale, team composition, and code complexity. Smaller teams often implement longer, more detailed reviews per change, emphasizing thoroughness, whereas larger teams adopt shorter, more frequent review cycles that emphasize rapid feedback and maintain momentum. Research advises an adaptive approach in which sizable, critical, or architectural changes undergo scrutiny by multiple reviewers, while minor fixes may require only one reviewer to maintain throughput without compromising quality.

Such procedural flexibility extends to balancing automation and human oversight. AI-powered tools efficiently handle repetitive, syntactic checks, enabling human reviewers to focus their efforts on higher-value assessments. Review frameworks that dynamically adjust reviewer assignment, pull request (PR) size, and feedback loops based on code impact have demonstrated superior efficiency and defect prevention. Importantly, documented and collaboratively agreed-upon PR review guidelines ensure sustainability, prevent reviewer fatigue, and distribute responsibilities evenly across development teams, fostering a culture of shared accountability and continuous quality improvement.
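The adaptive rule described in this subsection, where sizable, critical, or architectural changes receive multiple reviewers and minor fixes receive one, can be written down as a small policy function. The sketch below is illustrative only: the ChangeSet fields and the 400-line threshold are hypothetical placeholders that each team would need to set in its own agreed review guidelines.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChangeSet:
    lines_changed: int
    is_critical: bool = False           # e.g. touches security or payment paths
    touches_architecture: bool = False  # e.g. changes module boundaries or interfaces


def required_reviewers(change, large_change_lines=400):
    """Return how many human reviewers a change needs under this sketch policy."""
    if change.is_critical or change.touches_architecture:
        return 2
    if change.lines_changed >= large_change_lines:
        return 2
    return 1  # minor fixes keep a single reviewer to preserve throughput


if __name__ == "__main__":
    print(required_reviewers(ChangeSet(lines_changed=12)))
    print(required_reviewers(ChangeSet(lines_changed=650)))
    print(required_reviewers(ChangeSet(lines_changed=8, is_critical=True)))
```

Keeping the policy in code alongside the written guidelines makes it reviewable and versioned like any other change, and lets a CI check enforce it consistently instead of relying on individual judgment for every pull request.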