AI-Powered Semiconductor Renaissance: AMD's Strategic Ascent Amid Market Rally

Table of Contents

- Executive Summary

- Introduction

- 1. AI-Powered Semiconductor Renaissance: AMD's Strategic Ascent Amid Market Rally

- 2. AMD's Dual-Frontier Strategy: From Gaming Legacy to AI Infrastructure Powerhouse

- 3. Competitive Dynamics and Market Share Trajectory in the AI Chip Arena

- 4. Future Scenarios and Strategic Imperatives for Stakeholders

- 5. Conclusion: Charting a Course for AI-First Semiconductor Leadership

- Conclusion

Executive Summary

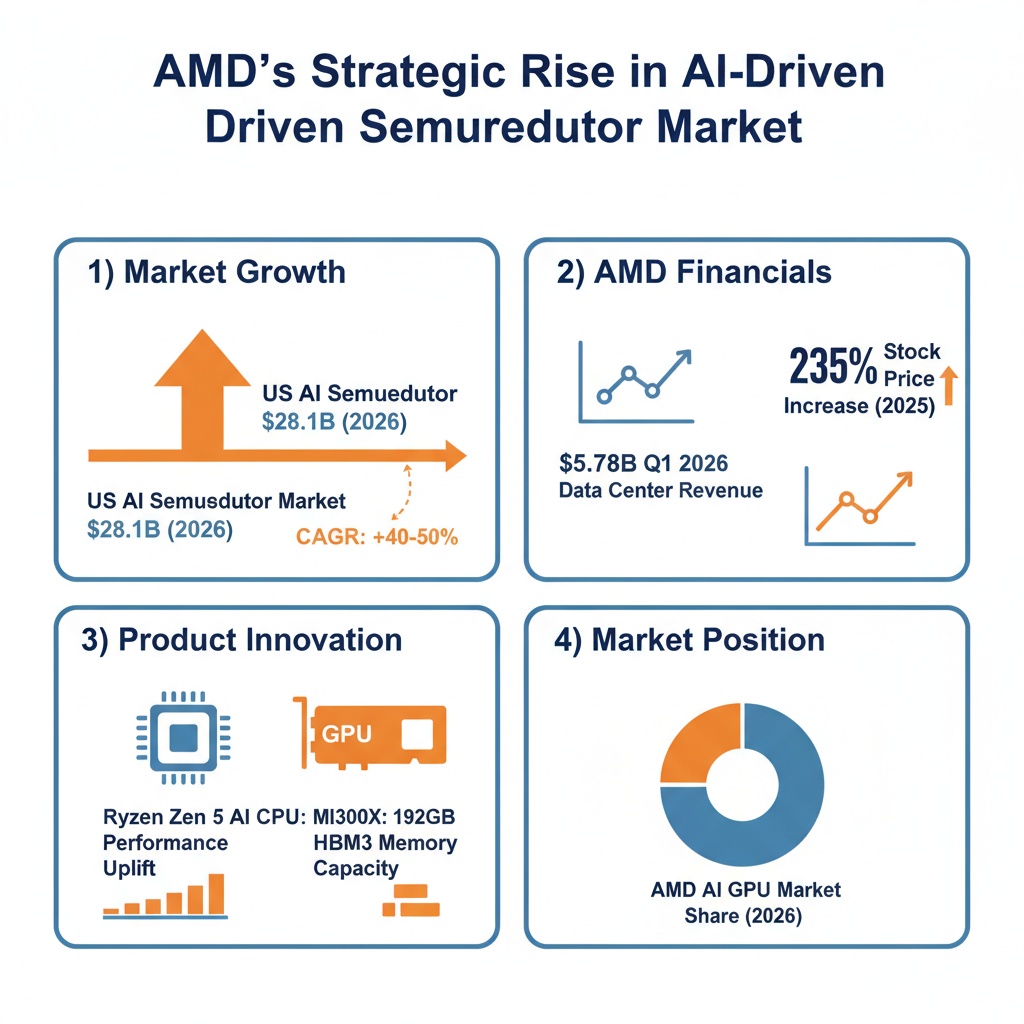

The semiconductor industry is undergoing a transformative AI-driven expansion, with the U.S. AI semiconductor market projected to surge from $28.1 billion in 2026 to nearly $150 billion by 2031, growing at a compound annual rate of approximately 40%. This unprecedented growth is underpinned by hyperscale data center investments, edge computing proliferation, and specialized AI workloads shifting chip architecture demands.

AMD has capitalized on this momentum through technological innovation embodied in its Zen 5 CPUs and RDNA 4 GPUs, alongside strategic partnerships such as the multi-gigawatt AI infrastructure deal with Meta. In Q1 2026, AMD reported revenues of $10.25 billion, with its data center segment contributing $5.78 billion, demonstrating year-over-year growth exceeding 40% and marking a decisive pivot towards enterprise AI markets. Despite Nvidia’s dominant market share of approximately 77% in AI wafer consumption, AMD’s focused design wins and evolving product roadmap have propelled a robust 235% stock rally since 2024, underpinned by expanding ecosystems and improved operational margins.

Introduction

The global semiconductor landscape is experiencing a renaissance driven by artificial intelligence (AI), reshaping market dynamics and technological priorities. This surge is characterized by intensive capital expenditure in AI infrastructure, rapid innovation in chip design, and shifting consumer and enterprise demands converging on AI compute capabilities. Within this context, AMD's strategic repositioning presents a noteworthy case of transformation from a historically gaming-centric firm to a formidable AI infrastructure contender.

Infographic Image: AMD’s Strategic Rise in the AI-Driven Semiconductor Market

Recent market projections indicate that the U.S. AI semiconductor segment alone is on track to more than quintuple over five years, catalyzed by the proliferation of large language models, generative AI applications, and autonomous systems. The growth trajectory compresses traditional semiconductor cycle timelines, emphasizing the urgency for players to adapt swiftly to AI’s computational requirements.

This report aims to dissect AMD’s ascent amid this AI semiconductor boom by analyzing technological advancements, financial performance, competitive positioning, and strategic partnerships. Further, it contextualizes AMD’s journey within broader industry trends, including the enduring dominance of Nvidia, emerging challenges from legacy competitors such as Intel, and evolving regulatory and geopolitical landscapes. The scope encompasses assessments of current market share, product innovation, investor sentiment, and future growth scenarios critical to stakeholders navigating a rapidly shifting AI-powered semiconductor sector.

1. AI-Powered Semiconductor Renaissance: AMD's Strategic Ascent Amid Market Rally

Global Semiconductor Market Entering AI-Driven Expansion Phase

This subsection establishes the overarching market context underpinning AMD’s strategic ascent by detailing the transformative impact of artificial intelligence on the semiconductor industry. It elucidates how AI adoption catalyzes unprecedented growth momentum, thereby framing the scale and urgency of sector-wide developments that enhance AMD’s market opportunities and competitive positioning.

US AI Semiconductor Market Projected to Reach $149.8 Billion by 2031

The United States AI semiconductor market is undergoing explosive expansion, with forecasts projecting growth from approximately $28.1 billion in 2026 to nearly $150 billion by 2031. This trajectory corresponds to an extraordinary compound annual growth rate of nearly 40%, underscoring the sector’s centrality to the nation’s digital infrastructure and technological competitiveness. The surge aligns with increasing deployment of AI-optimized semiconductor architectures tailored for large language models, cloud computing, autonomous systems, and advanced analytics, which require massive parallel computational capacity.

This market growth is fueled by hyperscale data centers, edge computing devices, and AI-enabled industrial systems demanding specialized processors capable of efficiently executing complex AI algorithms at scale. Concurrent government initiatives supporting domestic manufacturing capacity, supply chain resilience, and R&D investments further bolster this growth environment, positioning the United States as a key innovator and producer in AI semiconductor technologies.

These projections from $28.1 billion in 2026 to $149.8 billion by 2031 vividly illustrate the accelerating role of AI in transforming semiconductor demand and innovation cycles [Chart: US AI Semiconductor Market Growth Projection].
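The growth rate implied by these two endpoints can be checked with a short calculation. The sketch below assumes the standard compound annual growth rate formula applied over the five years from 2026 to 2031; the function name `cagr` and the variable names are illustrative and do not come from the underlying forecast.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate as a fraction (0.40 means 40%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Endpoints quoted in the text, in billions of US dollars.
market_2026 = 28.1
market_2031 = 149.8

implied_rate = cagr(market_2026, market_2031, years=2031 - 2026)
growth_multiple = market_2031 / market_2026

print(f"Implied CAGR: {implied_rate:.1%}")
print(f"2031 market as a multiple of 2026: {growth_multiple:.2f}x")
```

Under these assumptions the rate comes out just under 40% per year and the 2031 market is a little more than five times the 2026 figure, consistent with the numbers quoted above.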

Semiconductor Industry Surpasses $1 Trillion in Revenue in 2026 Amid AI Surge

In 2026, the semiconductor industry achieved a historic milestone, surpassing $1 trillion in global revenue for the first time. This rapid growth, accelerated by AI-driven demand, exceeds previous industry expectations and pulls the milestone forward by several years. The performance leap is chiefly attributed to soaring memory and logic integrated circuit revenues driven by hyperscale data center expansion and AI infrastructure investments.

Memory components such as DRAM and NAND flash experienced unprecedented price increases and revenue surges, reflecting their critical role in supporting AI workloads with high bandwidth and storage requirements. Computing and data storage segments led overall growth, with projected year-over-year increases exceeding 40%, emphasizing the vital importance of semiconductors in underpinning AI capabilities across enterprise IT and consumer-facing applications.

AI Workloads as the Primary Demand Engine Reshaping Chip Architectures

The rapid adoption of AI workloads is a fundamental driver reshaping semiconductor demand profiles and technology design imperatives. Large language models, generative AI, and autonomous systems generate computational patterns that differ significantly from traditional workloads, necessitating chips optimized for massive parallelism, matrix multiplications, and sparse data operations.

This dynamic elevates demand for specialized chip families, including graphics processing units, tensor processing units, and custom ASICs, as well as advanced memory solutions like high-bandwidth memory (HBM). These components enable hyperscale data centers and edge devices to efficiently support AI training, inference, and real-time analytics, thereby redefining the semiconductor product and investment landscape. The ongoing capital reallocation within enterprise and hyperscaler infrastructure further accelerates growth in AI-optimized chip segments.

Having established the profound macroeconomic and technological drivers behind the semiconductor industry’s AI-led revenue surge, the following subsection will analyze how these factors have translated into a synchronized market rally among semiconductor equities, particularly highlighting AMD's emergence within the evolving competitive landscape.

Chipstock Rally Reflects Structural Shift Toward AI Infrastructure Investment

This subsection analyzes how recent market movements in semiconductor equities, particularly those of AMD, illustrate a fundamental transition in investor sentiment driven by the growing AI infrastructure investment wave. By quantifying index-level surges and tracing the causal linkages between industry-wide earnings reports and stock price reactions, it clarifies the market’s revaluation of AI-enabled semiconductor firms as core beneficiaries of the technology-driven expansion.

Quantifying the PHLX Semiconductor Index Surge and Its Impact on AMD Stock Performance

The Philadelphia Semiconductor Index experienced a robust rally, advancing approximately 3% in a critical trading session marked by heightened AI optimism. This uptrend was led principally by major players including Broadcom, AMD, and Nvidia, which combined to drive not only absolute gains but also market sentiment across the sector. Specifically, AMD’s shares surged around 4.5% in one notable day, situating it among the best-performing equities and reinforcing its perception as a key AI beneficiary.

This rally is embedded in a broader 2026 trend where the PHLX index recorded gains exceeding 40% year-to-date at various points, reflecting sustained institutional and retail investor appetite for semiconductor stocks. AMD's stock trajectory, highlighted by a multi-month advance surpassing 70% before its Q1 earnings release, demonstrates the direct correlation between AI-driven growth expectations and equity appreciation across companies positioned to capitalize on AI infrastructure demand.

Decoding TSMC’s Earnings as a Catalyst for AMD and Sector-Wide Upside

TSMC’s financial results served as an immediate catalyst, validating the AI investment narrative across semiconductor supply chains. Strong wafer fabrication demand tied to AI workloads, as reported by TSMC, injected confidence into the market, with ripple effects lifting AMD’s shares alongside industry peers. The positive supplier outlook signaled robust upstream chip production capacity utilization and sustained momentum in next-generation AI chip demand, a critical input factor for AMD’s accelerated product roadmap.

This cascading effect underscores an investor recognition that AI growth is not limited to the leading chip designers alone but extends through fabrication, packaging, and materials providers. The interdependence strengthens AMD’s market outlook by anchoring its product competitiveness within a reliable, high-volume manufacturing ecosystem, inspiring multiple analysts to raise price targets concurrently with TSMC’s upbeat guidance.

Investor Sentiment Correlated with AI Infrastructure Capex Driving Semiconductor Stocks Higher

The rally in chip stocks like AMD closely correlates with rising hyperscaler capital expenditure on AI infrastructure, which has been forecasted to grow sharply, with many of the Big Five hyperscalers poised to increase AI-related investments by more than 70% year-over-year. This surge in AI hardware capex propels demand for CPUs, GPUs, and specialized AI accelerators, positioning semiconductor producers at the core of the AI economic expansion.

Market commentary consistently points to a structural shift, where AI infrastructure spending is not episodic but represents a durable investment cycle supporting semiconductor revenue growth and valuation multiples. Investors are thus increasingly confident that AI-driven compute demand forms a strategic tailwind transcending traditional semiconductor cyclical patterns, emboldening bullish sentiment and encouraging broader sector participation beyond dominant players to include emerging AI chip leaders like AMD.

Having established the concrete market evidence of AI infrastructure investment fueling a semiconductor rally and the pivotal role of industry-wide earnings and capex trends, the report proceeds to an in-depth analysis of AMD’s strategic differentiation and internal capabilities that enable it to leverage these market tailwinds.

2. AMD's Dual-Frontier Strategy: From Gaming Legacy to AI Infrastructure Powerhouse

Technological Differentiation Anchored in Zen 5 CPUs and RDNA 4 GPUs Fuels AMD’s AI Competitiveness

This subsection elucidates the foundational technological innovations underlying AMD's dual-frontier strategy, highlighting how architectural advancements in CPUs and GPUs enable AMD to benchmark competitively against incumbents while capturing AI-centric workloads. By dissecting CPU performance metrics, GPU adoption trends, and competitive positioning of flagship products, the analysis quantifies AMD’s capability to serve growing AI infrastructure demands while maintaining efficiency and cost advantages.

Zen 5 Architecture Elevates AI Compute Through Enhanced Ryzen AI Integration

The Zen 5 microarchitecture, introduced in mid-2024, marks a significant leap over its Zen 4 predecessor, reflecting AMD's strategic emphasis on AI-accelerated computing across client and data center segments. Benchmarks for Q1 2026 indicate that Ryzen AI CPUs, which integrate dedicated AI accelerators within the Zen 5 architecture, achieve performance uplifts of approximately 25-30% on AI inference workloads compared to earlier Ryzen 9 7950X models. This is driven by expanded vector processing units, improved cache hierarchies, and instruction set extensions optimized for the matrix operations critical in LLM-serving and real-time agentic AI tasks.

Moreover, Zen 5’s architectural refinements have reduced inference latency and increased throughput through improved memory bandwidth and branch prediction efficiencies, supporting workloads ranging from edge deployments to hyperscale servers. The balance between power efficiency and raw processing power positions AMD's Ryzen AI series as competitive alternatives for enterprises seeking scalable AI inference CPUs that can manage diverse real-time applications without heavy reliance on GPU acceleration.

RDNA 4 GPU Architecture Drives Broad Adoption in Generative AI and Gaming Workloads

AMD’s RDNA 4 GPUs, launched in early 2025, have gained rapid adoption in both gaming and AI inference markets, leveraging architectural enhancements that optimize parallelism and specialized AI cores. Market data through 2025 indicate that RDNA 4-powered products, such as the Radeon RX 9070 XT, not only set new sales records in enthusiast segments but also support efficient execution of generative AI workloads, including real-time rendering and AI-assisted content creation.

Key architectural improvements include enhanced ray tracing through dedicated hardware units and AI accelerators embedded within the GPU pipeline, facilitating improved frame generation and upscaling via machine learning techniques. The inclusion of AMD’s FSR "Redstone" machine learning suite further distinguishes RDNA 4 in delivering visually immersive experiences while maintaining lower power consumption relative to comparable NVIDIA offerings. These innovations reflect AMD’s capability to address both consumer-grade AI workloads and specialized inference tasks with a cost-effective GPU lineup.

Instinct MI300X and MI400 Series Narrow Gap with NVIDIA’s H100/H200 Through Memory and FP Performance

AMD’s data center GPU offerings, notably the MI300X and evolving MI400 series, are pivotal in challenging NVIDIA's dominance in AI infrastructure, providing compelling price-performance ratios that appeal to hyperscalers and research institutions. The MI300X boasts 192GB of HBM3 memory—over double the capacity of NVIDIA’s 80GB H100—which significantly benefits large-scale transformer models and extensive AI training datasets by reducing memory bottlenecks in distributed training architectures.

Performance benchmarks demonstrate that the MI300X delivers up to 163.4 teraflops on FP64 matrix operations, roughly 2.4 times higher than NVIDIA’s H100, indicating strong double-precision capabilities essential in scientific computing and machine learning. Moreover, the MI300X achieves 60% higher memory bandwidth than the H100, enhancing throughput for data-intensive AI workflows. The H200 narrows this advantage with improved memory bandwidth, but the MI300X’s open software ecosystem, anchored by the ROCm platform, and its competitive power envelope remain important differentiators. These factors have resulted in increased design wins at major cloud providers, validating AMD's competitive positioning amidst NVIDIA’s entrenched CUDA ecosystem.
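The practical effect of the capacity gap can be illustrated with a rough sizing calculation. The sketch below is a simplification under stated assumptions: it counts model weights only (ignoring activations, optimizer state, and KV cache), stores each parameter in 16 bits, and treats 1 GB as 10^9 bytes. The model sizes and the helper `min_gpus_for_weights` are hypothetical and are not taken from the benchmarks cited above.

```python
import math


def min_gpus_for_weights(params_billions: float, bytes_per_param: int,
                         gpu_memory_gb: float) -> int:
    """Smallest GPU count whose combined memory holds the model weights alone."""
    # 1e9 parameters * bytes per parameter / 1e9 bytes per GB = GB of weights.
    weights_gb = params_billions * bytes_per_param
    return math.ceil(weights_gb / gpu_memory_gb)


MI300X_GB = 192  # capacity quoted in the text
H100_GB = 80     # capacity quoted in the text

# Hypothetical model sizes (billions of parameters), stored as 16-bit values.
for size in (70, 405):
    on_mi300x = min_gpus_for_weights(size, 2, MI300X_GB)
    on_h100 = min_gpus_for_weights(size, 2, H100_GB)
    print(f"{size}B parameters: {on_mi300x} x 192GB vs {on_h100} x 80GB")
```

Fewer devices per model generally means less cross-device communication, which is the memory bottleneck the capacity comparison refers to. Real deployments need additional headroom beyond the weights, so these counts are lower bounds.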

Together, the Zen 5 processors and RDNA 4 GPUs establish a robust technological foundation that strengthens AMD's AI infrastructure credentials. However, hardware innovation alone is insufficient without ecosystem support and strategic partnerships, which are addressed in the next subsection to underscore AMD's holistic approach to cementing its role as an AI infrastructure powerhouse.

Forging Powerful Alliances and Open Ecosystems: AMD’s Multi-Gigawatt AI Software and Hardware Collaborations

This subsection elucidates AMD’s strategic approach to building a robust AI hardware and software ecosystem through high-profile partnerships, expansive deployment agreements, and an evolving open-source software platform. It details how AMD leverages these alliances and software innovations to penetrate hyperscaler AI infrastructure, accelerate developer adoption, and underpin enterprise-level LLM deployments—thereby solidifying AMD’s foothold in the fiercely competitive AI chip market.

Meta’s Multi-Gigawatt AI Infrastructure Deal: Timeline, Scale, and Strategic Impact

AMD’s collaboration with Meta represents one of the semiconductor industry’s largest AI infrastructure commitments. In early 2026, the two companies formalized a multiyear agreement wherein AMD will supply up to six gigawatts of its custom Instinct GPUs to power Meta’s next-generation AI data centers globally. The initial rollout uses a bespoke version of AMD’s MI450 GPU architecture and is slated to commence shipments in the second half of 2026. This phased deployment reflects an alignment of silicon, system, and software roadmaps—delivering AI platforms purpose-built for Meta’s diverse workload demands at hyperscale.

The deal significantly advances Meta’s strategic push to reduce its historical dependency on rival chipmakers by building a multi-supplier AI hardware base. Notably, AMD granted Meta performance-based warrants for approximately 10% ownership in AMD, underscoring a deep, long-term commercial relationship that extends beyond a vendor-client model. The enormous power capacity under contract indicates Meta’s aggressive AI compute expansion as foundational to its multi-billion-dollar AI investment strategy, spanning from AI model training to inference at mass scale.

AMD ROCm Platform Penetration and Developer Adoption Metrics in 2025

AMD’s ROCm software platform functions as the linchpin enabling hardware-software co-optimization for AI workloads. In 2025, ROCm underwent major enhancements targeting generative AI and large language model (LLM) acceleration, including improved performance optimizations, expanded framework support (e.g., PyTorch and TensorFlow), and streamlined developer workflows. These advancements significantly bridged the gap with incumbent solutions and addressed previous developer experience challenges through focused investments in usability and tooling.

Corporate adoption metrics reveal that ROCm has gained meaningful traction across hyperscalers and enterprises. Azure and Oracle Cloud Infrastructure incorporated ROCm-optimized MI300-series accelerators to support production-grade AI workloads such as GPT-3.5 and Llama 3.1, validating the platform’s readiness for large-scale AI training and inference. Moreover, ROCm’s open-source architecture offers a critical competitive advantage, freeing ecosystem participants from dependency on proprietary CUDA environments and enabling greater architecture flexibility and cost optimization in multi-vendor AI deployments.

Commercial Scale Deployments of AMD-Powered Enterprise LLMs via Lamini and MosaicML

AMD has strategically integrated with leading AI software vendors to amplify its penetration into enterprise LLM markets. Through partnerships with Lamini and MosaicML, AMD-powered GPUs are enabling sophisticated model tuning and training with minimal code adaptation, providing customers with cost-effective and scalable AI solutions. For instance, Lamini’s enterprise LLM deployments leverage AMD hardware to optimize factual accuracy and inference speed, drastically reducing time-to-value for business-critical AI applications.

These collaborations underscore the maturity of AMD’s full-stack AI offering, combining hardware acceleration with software frameworks specialized for demanding inference and training workloads. MosaicML, renowned for its efficient, compute-agnostic training orchestration, exploits ROCm compatibility to facilitate near-linear scaling across AMD GPU clusters. Together, these partnerships illustrate AMD’s ability to support complex, multi-node LLM training at scale—effectively competing with incumbents by lowering barriers to enterprise adoption and accelerating innovation cycles in production environments.

With AMD’s strategic partnerships and open ecosystem foundation firmly established, the report next examines how these collaborations translate into competitive dynamics, revealing opportunities and threats in the AI accelerator marketplace as AMD challenges entrenched incumbents.

Financial Resilience and Margin Expansion Powering AMD’s Data Center Leadership

This subsection provides a granular financial dissection of AMD’s data center business, illustrating how its robust revenue contributions and expanding margins underpin the company’s transformation into a dominant AI infrastructure player. By analyzing recent quarterly results, margin trends, and growth forecasts, we reveal the financial mechanics driving AMD’s strategic momentum and assess the sustainability of this trajectory.

Breakdown of AMD’s Q1 2026 Revenue Reflecting Data Center Dominance

AMD reported outstanding first quarter 2026 revenue of approximately $10.25 billion, exceeding analysts' consensus by more than 4%. Over half of this revenue—approximately $5.78 billion—originated from the data center segment, evidencing its emergence as AMD’s primary growth engine. This segment’s performance surpassed street estimates and accounted for the bulk of AMD’s year-over-year revenue increase, underscoring the company’s successful pivot from a consumer-centric to an enterprise-driven revenue model. Other segments such as client and embedded showed moderate improvement, but the data center business clearly led the financial charge.

The data center segment’s outsized contribution is supported by increased demand for EPYC server CPUs and Instinct GPUs, fueled by hyperscaler investments and AI infrastructure projects. This shift is accentuated by major AI customers committing multiple gigawatts of AMD’s Instinct deployments, anchoring a $23 billion annualized revenue run rate that validates AMD’s growing relevance in large-scale AI compute environments.
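The segment share and run-rate figures above follow from simple arithmetic on the quarterly numbers. The sketch below assumes that "annualized run rate" means a single quarter's data center revenue multiplied by four; the source does not state the method, so this is an interpretation, and the variable names are illustrative.

```python
q1_2026_total = 10.25        # total revenue, billions USD (from the text)
q1_2026_data_center = 5.78   # data center revenue, billions USD (from the text)

data_center_share = q1_2026_data_center / q1_2026_total

# Assumption: "annualized run rate" means one quarter's revenue times four.
annualized_run_rate = q1_2026_data_center * 4

print(f"Data center share of revenue: {data_center_share:.1%}")
print(f"Annualized data center run rate: ${annualized_run_rate:.2f}B")
```

On these inputs the data center segment accounts for a little over 56% of quarterly revenue, and four times the quarterly figure gives roughly $23 billion.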

Margin Trends Indicate Operational Efficiency and Profitability Gains

AMD’s gross margin profile continues to improve, reflecting both product mix transformation and operational leverage within its data center business. For Q1 2026, non-GAAP gross margin reached approximately 55%, an increase driven largely by the higher-margin data center sales. This was coupled with significant expansion in operating income, which grew more than 80% year-over-year to roughly $1.5 billion, while net income nearly doubled to around $1.4 billion. These margin gains evidence enhanced pricing power, efficient cost management, and scalability as AMD ramps AI-related product shipments.

Sequential margin improvement is expected to persist in the near term as next-generation EPYC processors continue to ramp and as Instinct GPU sales grow alongside expanded cloud and hyperscaler deployments. AMD’s guidance for Q2 2026 anticipates revenue of roughly $11.2 billion, implying roughly 46% year-over-year growth, further supporting sustainability of margin expansion driven by its data center segment.

Outlook and Growth Forecasts Point to Sustained Data Center Momentum

Looking ahead, AMD projects robust growth in its data center segment extending into 2027. The company forecasts server CPU total addressable market growth exceeding 35% annually through 2030, reflecting accelerating adoption of AI-accelerated compute infrastructure. The near-term outlook is notably bullish, with expectations of more than 70% year-over-year growth in server CPU revenue for Q2 2026 alone, signaling strong market penetration and demand resilience.

Such forecasts reinforce AMD’s strategy of establishing itself as a credible second source to dominant providers in AI compute. Continued expansion of design wins, hyperscaler commitments, and strategic partnerships are key drivers supporting a sustained revenue run rate projected to grow well beyond $23 billion over the coming years, underpinning both top-line growth and margin leverage.

With AMD’s financial foundation in the data center now firmly established, the subsequent discussion will explore how these fiscal strengths translate into competitive dynamics within the broader AI chip market, highlighting both opportunities and risks ahead.

3. Competitive Dynamics and Market Share Trajectory in the AI Chip Arena

Navigating the AI Accelerator Landscape: AMD’s Market Position Amidst NVIDIA Dominance and Intel’s Challenges

This subsection critically examines the competitive dynamics shaping the AI accelerator landscape, focusing on AMD’s positioning relative to dominant players and emerging challengers. By updating market share figures, assessing AMD’s gains in the high-stakes data center GPU segment, and evaluating Intel’s competitive threat, this analysis clarifies AMD’s strategic foothold and its prospects within a rapidly evolving market.

Nvidia’s Overwhelming AI Wafer Market Share and AMD’s Niche Growth

Nvidia maintains a commanding position in the AI processor wafer market, projected to consume approximately 77% of global AI wafer shipments in 2025. This share reflects Nvidia’s ability to secure allocations exceeding half a million 300mm wafers, primarily in support of its Hopper and Blackwell GPU architectures. The scale and pace of Nvidia’s production ramp underscore its entrenched leadership across hyperscaler data centers and AI infrastructure deployments.

In contrast, AMD's share of AI wafer consumption is expected to contract from about 9% to approximately 3% in 2025 despite ongoing shipment growth. This decline in relative share does not signify a drop in absolute volume; rather, Nvidia’s faster expansion outpaces AMD’s growth trajectory in wafer allocations. AMD’s AI accelerator family, including the MI300, MI325, and MI355 GPUs, commands wafer orders ranging between 5,000 and 25,000 units, signaling an incremental yet meaningful presence centered on select hyperscaler partnerships and targeted workloads.
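The distinction between relative share and absolute volume can be made concrete. A supplier's volume equals its share multiplied by total market volume, so the change in its volume is the ratio of its shares times the growth multiple of the total market. The sketch below uses the 9% and 3% shares quoted above; the market growth scenarios are hypothetical and the function name `volume_multiple` is illustrative.

```python
def volume_multiple(share_before: float, share_after: float,
                    total_market_multiple: float) -> float:
    """Supplier volume after / before, given its shares and total market growth."""
    return (share_after / share_before) * total_market_multiple


share_before, share_after = 0.09, 0.03  # AMD shares quoted in the text

# Total-market growth at which the supplier's absolute volume stays flat.
breakeven_multiple = share_before / share_after

# Hypothetical total-market growth scenarios (not from the source).
for market_multiple in (2.0, 3.0, 4.0):
    change = volume_multiple(share_before, share_after, market_multiple)
    print(f"Market x{market_multiple:.1f} -> supplier volume x{change:.2f}")
```

With these shares, absolute volume holds steady only if total AI wafer volume roughly triples over the same period, and it grows only if the market expands faster than that.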

While Nvidia’s dominance remains clear, AMD’s maintained foothold is significant. It enables the company to establish itself as a credible alternative supplier for AI acceleration, vital for customers seeking supplier diversification amid supply chain constraints and geopolitical uncertainties.

Expanding AI GPU Data Center Presence: AMD’s Gains in Hyperscaler Design Wins

AMD’s progress in the AI GPU data center market reflects a growing portfolio of design wins with leading hyperscalers such as Meta, Oracle, and Microsoft. These strategic partnerships have translated into incremental but important market share gains. Industry estimates place AMD’s data center GPU market share in the single digits, approximately 5–7% as of early 2026, up from historic lows but still dwarfed by Nvidia’s commanding 80–86% share.

Crucially, AMD’s architectural advancements with the MI300 and upcoming MI400 series have enabled it to compete on performance per watt and total cost of ownership metrics, attributes increasingly valued by hyperscalers managing extensive AI workloads. These design wins are not limited to generic GPU replacements but extend into specialized AI inference and training clusters where cost efficiency and heterogeneous compute models are prioritized.

Despite AMD’s smaller scale, these wins affirm its growing credibility and influence within AI infrastructure, offering customers viable alternatives to Nvidia’s ecosystem-dependent offerings—especially relevant in the context of hyperscalers' efforts to mitigate supply risk and reduce single-vendor exposure.

Intel’s Raptor Lake Refresh and AI Accelerator Challenges: Assessing Competitive Threats

Intel’s position in the AI accelerator market remains challenged despite efforts to refresh its product lineup. The Raptor Lake Refresh series, while providing respectable CPU performance, encountered stability issues and supply shortages in 2024, constraining inventory and limiting market penetration. Additionally, subsequent releases such as Arrow Lake have underperformed relative to both AMD and Nvidia offerings, particularly in AI-specific inference and training workloads where GPU acceleration is critical.

Intel’s Gaudi family of AI accelerators was meant to carve out a competitive niche but has suffered from an immature software ecosystem and underwhelming adoption. The company missed its goal of $500 million in AI accelerator sales for 2024, reflecting broader difficulties in capturing hyperscaler design wins and penetrating entrenched markets.

Looking ahead, Intel is integrating Gaudi AI accelerators with its data center GPU efforts under a unified product strategy, yet its impact in 2025 and beyond remains uncertain. This underperformance contrasts sharply with AMD’s momentum and may constrain Intel’s ability to pose a direct competitive threat to AMD’s growing share in AI GPU deployments.

Having established AMD’s relative market share realities and competitive landscape, the subsequent analysis will delve into the nuanced risk-return profile confronting AMD as it navigates market volatility and attempts to leverage its technological and strategic gains against entrenched giants.

Risk-Reward Profile Under Scrutiny: Bullish Momentum Meets Market Volatility and Valuation Challenges

This subsection critically examines the interplay between AMD’s recent bullish stock performance driven by AI optimism and the persistent risks posed by inventory levels, macroeconomic uncertainties, and sector-wide volatility. By analyzing investor sentiment, analyst consensus, and valuation metrics, it provides a balanced perspective on AMD’s current market positioning and the sustainability of its rally amid evolving semiconductor industry dynamics.

Impact of Inventory Levels and Global Economic Slowdown on AMD Valuation

AMD’s valuation remains sensitive to fluctuations driven by inventory adjustments and broader economic trends. Despite strong revenue growth in data centers and AI-focused segments, concerns about inventory buildup have periodically tempered investor enthusiasm. These concerns stem largely from uncertainties in product demand forecasting and geopolitical constraints, including export limitations impacting certain GPU sales. Elevated inventory risks, coupled with a global economic slowdown, have introduced heightened volatility in AMD's stock price dynamics, reflecting the semiconductor sector's inherent cyclicality.

Moreover, the semiconductor market’s exposure to shifting macroeconomic conditions—such as supply chain disruptions and cautious corporate IT spending—contributes to downward pressure on valuations. This environment necessitates robust supply chain management and clear demand visibility from AMD to maintain investor confidence and sustain market multiples.

Analyst Price Targets and Consensus: Range and Underlying Drivers

Wall Street consensus around AMD exhibits a cautiously optimistic stance, with analyst price targets spanning broadly from approximately $130 up to $250. This range embodies divergent views on AMD’s capacity to accelerate growth, execute its AI infrastructure strategy, and counter competitive pressures from Nvidia and Intel. The median price target generally falls between roughly $140 and $180, reflecting recognition of AMD’s technological advancements and partnership traction alongside risks related to valuation stretch and margin pressures.

Recent analyst upgrades and maintained 'Outperform' ratings underscore confidence in AMD’s transition toward enterprise AI markets and the strength of its product roadmap. Conversely, some reports urge caution given elevated price-to-earnings multiples and the likelihood that persistent R&D and marketing expenditures will constrain near-term margin expansion. Overall, the consensus narrative frames AMD as a high-growth, high-volatility candidate, appealing primarily to investors with a long-term horizon and a tolerance for sector cyclicality.

Fundamental Drivers Behind AMD’s 235% Stock Rally Timeline

The 235% rally since 2024 was anchored in fundamental business improvements rather than speculative excess. The surge coincided with announcements of strategic partnerships with leading AI platform providers and cloud hyperscalers, validating AMD’s role in next-generation AI infrastructure deployment. Financial results during this period featured record data center revenues and expanding gross margins, cementing market recognition of AMD's repositioning from a consumer PC chip maker to a serious AI infrastructure competitor.

Notably, the rally unfolded alongside the launch and scaling of AMD's AI-centric products, including the MI300X and Ryzen AI processors, which strengthened its competitive stance. Despite inventory charges tied to export regulations, the market’s focus on core operating momentum and contract wins sustained investor interest, reflected in continued stock appreciation across multiple quarters of 2025.

Complementing this bullish trajectory, AMD is projected to quadruple its AI-related revenue from $5 billion in 2024 to $20 billion by 2027, underscoring the significant growth potential of its data center and GPU segments as it scales AI infrastructure deployments [Table: AMD's Projected Revenue Growth].

Contextualizing AMD’s Stock Volatility Relative to the Semiconductor Sector

AMD’s stock exhibits volatility levels exceeding the broader market and semiconductor sector averages, as indicated by a beta nearing 2.0. This elevated price fluctuation arises from its high exposure to AI investment cycles, technology shifts, and competitive battles within a rapidly evolving market. The semiconductor industry’s innate cyclicality, combined with episodic geopolitical tensions and supply chain variability, compounds this effect, driving sharp fluctuations in AMD’s share price.
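As a rough illustration of what a beta near 2.0 means, the sketch below computes beta as the covariance of stock and market returns divided by the variance of market returns. The return series are synthetic placeholders, constructed so that the stock moves exactly twice as much as the market; they are not AMD or index data, and the function name `beta` is an illustrative choice.

```python
def beta(stock_returns, market_returns):
    """Sample covariance(stock, market) divided by sample variance(market)."""
    n = len(market_returns)
    if n != len(stock_returns) or n < 2:
        raise ValueError("need two equally long series with at least 2 points")
    mean_s = sum(stock_returns) / n
    mean_m = sum(market_returns) / n
    cov = sum((s - mean_s) * (m - mean_m)
              for s, m in zip(stock_returns, market_returns)) / (n - 1)
    var = sum((m - mean_m) ** 2 for m in market_returns) / (n - 1)
    if var == 0:
        raise ValueError("market returns have zero variance")
    return cov / var


# Synthetic (hypothetical) periodic returns, for illustration only.
market = [0.01, -0.02, 0.015, 0.005, -0.01]
stock = [2 * r for r in market]  # stock amplifies every market move 2x

example_beta = beta(stock, market)
print(f"beta = {example_beta:.2f}")
```

Under this definition, a beta of 2.0 means a 1% market move has historically corresponded to roughly a 2% move in the stock, in either direction.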

Compared to sector peers, AMD's stock demonstrates wider trading ranges, with frequent accelerations fueled by product launch news and partnership announcements, and pullbacks linked to market sentiment shifts or macro risks. This volatility profile necessitates risk-adjusted investment strategies, where incremental news flow and earnings surprises substantially influence short-term price performance. Despite this, the sustained upward trend since 2024 reflects durable confidence in AMD’s AI-driven growth narrative amidst episodic corrections.

Having delineated the balanced risk-reward environment surrounding AMD's stock performance, the analysis now progresses to juxtapose AMD’s competitive positioning against dominant and emerging players in the AI semiconductor market, exploring dynamics that will shape market share trajectories and valuation durability.

4. Future Scenarios and Strategic Imperatives for Stakeholders

Navigating Accelerated Growth and Emerging Saturation in AI Semiconductor Markets

This subsection analyzes the long-term growth trajectory of the AI semiconductor sector, focusing on updated compound annual growth rate (CAGR) projections and identifying saturation risk points across key segments. It further explores regulatory pressures and geopolitical dynamics that may temper AMD's growth potential and influence strategic planning. As AMD's AI-driven expansion increasingly shapes market outcomes, understanding these forward-looking indicators is essential for stakeholders to anticipate and mitigate emerging risks while optimizing investment and operational decisions.

Sustained High Growth with Subtle Signs of Post-2026 Deceleration

Recent forecasts indicate that the U.S. AI semiconductor market will expand rapidly from approximately $28 billion in 2026 to nearly $150 billion by 2031, translating to a robust CAGR of nearly 40%. This reflects aggressive deployment of AI-specific chip architectures powering large language models, cloud services, and autonomous platforms. The confluence of substantial investments in fabrication capacity, R&D, and AI workloads underpins this unprecedented growth phase.
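The growth rate quoted above can be checked with the standard CAGR formula, (end / start)^(1 / years) - 1. The sketch below is a minimal check, assuming the $28.1 billion starting figure given in the Executive Summary, a rounded $150 billion end value, and five compounding periods between 2026 and 2031; the function name `cagr` is illustrative.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values over `years` periods."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# U.S. AI semiconductor market, in billions of USD (figures from the report).
us_ai_semis_cagr = cagr(28.1, 150.0, 2031 - 2026)
print(f"Implied CAGR: {us_ai_semis_cagr:.1%}")  # about 39.8%
```

The result is consistent with the "nearly 40%" figure cited in the text.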

However, market intelligence points to an expected moderation in growth beyond 2026 as early waves of AI adoption approach maturity. Trends in demand drivers such as hyperscale data center expansion and memory pricing stabilization suggest that, while absolute revenue growth remains positive, incremental gains will taper in the latter half of the decade. This inflection underscores the need for AMD and its peers to diversify product offerings and keep innovating to sustain momentum as use cases mature and competition intensifies.

Regulatory and Geopolitical Pressures Reshape Investment and Market Access

Heightened regulatory scrutiny targeting semiconductor supply chains, cross-border mergers, and technology transfers poses meaningful challenges to market participants including AMD. Export controls on advanced manufacturing equipment and AI chip technologies, especially those linked to U.S.-China tensions, constrain revenue avenues and complicate operational planning. These controls necessitate strategic realignment favoring secure domestic ecosystems backed by governmental policies and incentives.

Furthermore, the complex landscape of foreign direct investment restrictions and antitrust oversight is increasing uncertainty surrounding international collaborations and acquisitions. Semiconductor companies must navigate evolving frameworks that emphasize national security, industrial policy, and ESG considerations. These dynamics influence AMD’s strategic partnerships and supply chain configurations, impelling heightened focus on supply resilience and compliance as integral components of long-term growth strategies.

Segment Saturation Points and Market Intervention Imperatives

Segment analysis reveals variable saturation rates within the AI semiconductor market. Chip demand from data centers and cloud infrastructure, which currently constitutes the bulk of AI hardware consumption, is projected to plateau earlier due to capital expenditure cycles and macroeconomic constraints. Conversely, emerging segments such as edge AI computing and inference chips exhibit prolonged growth windows, driven by increasing adoption in IoT devices, autonomous systems, and industrial applications.

This uneven saturation landscape implies that AMD must prioritize agility in product portfolio management—intensifying investment in low-latency, power-efficient edge solutions, while actively refining data center offerings to maintain relevance and competitiveness. Stakeholders should anticipate targeted interventions including accelerated innovation, flexible manufacturing paradigms, and diversification to extend growth cycles and mitigate the risks inherent in segment maturity.

Notably, AMD’s Q1 2026 revenue breakdown underscores the data center segment as the primary growth engine, contributing $5.78 billion compared to $2.5 billion from the client segment and $2.0 billion from embedded solutions. This significant disparity highlights the strategic imperative for AMD to sustain and evolve its data center offerings amid impending market saturation, while also expanding investment into emerging edge and embedded sectors [Chart: AMD’s Q1 2026 Revenue Breakdown].
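To make the disparity concrete, the sketch below computes each segment's share of the combined total of the three figures quoted above. It is a minimal illustration: the dictionary keys are illustrative labels, and shares are taken over the three listed segments only, so any revenue not itemized in the paragraph is ignored.

```python
# Q1 2026 segment revenue in billions of USD, as quoted in the text.
segment_revenue = {
    "data_center": 5.78,
    "client": 2.5,
    "embedded": 2.0,
}

listed_total = sum(segment_revenue.values())

# Share of each segment relative to the listed total.
segment_share = {
    name: revenue / listed_total
    for name, revenue in segment_revenue.items()
}

for name, share in segment_share.items():
    print(f"{name:12s} {share:.1%}")
```

On these figures the data center segment accounts for a little over half of the listed revenue (about 56%), more than the client and embedded segments combined.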

The subsequent subsection will examine complementary growth avenues, including edge computing adoption and sustainability initiatives, framing additional levers AMD and industry players can exploit to counterbalance market saturation and regulatory complexities.

Unlocking Growth Horizons: Edge AI Advancements and Sustainability Frontiers in Semiconductor Manufacturing

This subsection explores emerging vectors in semiconductor growth beyond centralized AI data centers, focusing on the edge computing hardware landscape and sustainable manufacturing. It elaborates on the critical role edge AI SoCs play in expanding AI’s reach to latency-sensitive, power-constrained environments and examines how advanced packaging and environmental compliance are steering industry competitiveness. Together, these factors frame a forward-looking vision where performance, energy efficiency, and regulatory alignment become intertwined imperatives for stakeholders.

Explosive Expansion of Edge AI SoCs: Market Dynamics and Strategic Implications

The edge AI semiconductor segment is undergoing rapid growth driven by deployment demands in autonomous vehicles, healthcare, industrial automation, and consumer electronics where real-time intelligence and privacy preservation are paramount. Market data indicate that shipment volumes of smart vision processing chips—a key category of edge AI SoCs—are projected to surge from approximately 365 million units in 2025 to over 1.1 billion by 2030, reflecting sustained double-digit annual growth rates. This expansion is fueled by continuous hardware cost reductions, increased AI capabilities integrated directly on devices, and growing enterprise interest in distributed analytics architectures that reduce dependency on cloud infrastructure.

Financially, the global edge AI hardware market was valued near $12 billion in 2025, with forecasts suggesting a roughly fourfold expansion to about $48.5 billion by 2034, at a quoted CAGR of around 19.5%–20%. This growth trajectory outpaces many segments of the broader semiconductor industry, highlighting edge AI as a distinct and strategic growth frontier. The proliferation of generative AI use cases at the device level further accelerates the need for powerful but energy-efficient neural processing units (NPUs) and accelerators, which AMD and peers can target by extending their AI-centric CPU and GPU technology stacks into edge-specific system-on-chip designs.
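The edge AI figures above can be cross-checked with a short calculation. The sketch below is a consistency check under stated assumptions: the endpoints are treated as exact, the shipment forecast uses 1.1 billion units as a lower bound for "over 1.1 billion", and the helper names `implied_cagr` and `project` are illustrative.

```python
def implied_cagr(start_value, end_value, years):
    """Annual growth rate implied by two endpoint values."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value, annual_rate, years):
    """End value reached by compounding `annual_rate` for `years` periods."""
    return start_value * (1 + annual_rate) ** years


# Smart vision chip shipments, millions of units, 2025 -> 2030.
shipment_cagr = implied_cagr(365, 1100, 2030 - 2025)

# Edge AI hardware market, billions of USD, 2025 -> 2034.
market_cagr = implied_cagr(12.0, 48.5, 2034 - 2025)

# What $12B would grow to at the quoted 19.5%-20% over the same nine years.
end_at_low = project(12.0, 0.195, 9)
end_at_high = project(12.0, 0.20, 9)

print(f"Shipments: {shipment_cagr:.1%} per year")
print(f"Market value: {market_cagr:.1%} per year from the stated endpoints")
print(f"At 19.5%-20%: ${end_at_low:.1f}B to ${end_at_high:.1f}B by 2034")
```

The shipment figures imply growth of roughly 25% per year, in line with the double-digit description. The market-value endpoints, taken at face value over nine years, imply closer to 17% per year, so the quoted 19.5%–20% rate presumably rests on a different base year or base value than the $12 billion figure for 2025.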

These trends emphasize the semiconductor sector’s pivot toward diversified product portfolios addressing both cloud-centric and edge use cases. For AMD, leveraging technological innovations like Zen 5 and RDNA 4 architectures in compact, low-power form factors will be critical to capturing edge AI market share while expanding beyond traditional high-performance computing domains.

Advanced Packaging Innovations Driving Latency and Energy Efficiency Gains

Advanced semiconductor packaging technologies, including fan-out wafer-level packaging (FO-WLP), panel-level packaging (PLP), and heterogeneous 2.5D/3D integration, have become pivotal enablers of performance scaling while managing power density and thermal constraints. The advanced packaging market was valued at $20.2 billion in 2014 and was forecast to more than double to over $48 billion by 2024, with estimated annual growth in excess of 12%. This packaging evolution facilitates closer integration of compute cores and memory, reduces interconnect lengths, and supports the higher bandwidth densities vital for AI workloads at both edge and data center scales.

Such technologies also contribute directly to energy efficiency by optimizing signal integrity and enabling innovative cooling solutions, thereby extending chip longevity and lowering operational expenses. For companies like AMD, embracing advanced packaging is not just a manufacturing upgrade but a strategic lever to differentiate AI accelerators via heterogeneous system-in-packages (SiP) that combine CPUs, GPUs, memory, and AI accelerators within a compact footprint optimized for power and performance. These packaging advancements help mitigate design trade-offs inherent in scaling sub-3nm nodes, simultaneously addressing thermal and latency bottlenecks critical to sustaining AI compute density gains.

Looking forward, the growing deployment of co-packaged optics (CPO) further represents an emerging frontier in packaging innovation. By integrating optical transceivers directly alongside switching ASICs or AI processors, CPO reduces energy consumption by 25-30% and latency by up to 15%, representing a transformational leap in interconnect efficiency within hyperscale data centers and AI clusters. This technology is swiftly gaining traction, with market estimates projecting a rise from $3.5 billion in 2024 to nearly $16 billion by 2033. For AMD, participation in or alignment with CPO ecosystem initiatives and suppliers could fortify its competitive positioning amid intensifying demands for AI data throughput and sustainability targets.

Regulatory and Sustainability Frameworks Shaping Semiconductor Competitiveness in EU and APAC

Environmental regulations and sustainability imperatives have emerged as critical factors influencing semiconductor manufacturing strategies globally. In the European Union, ambitious climate legislation aims for climate neutrality by 2050 with intermediate targets including a 55% reduction in greenhouse gas emissions by 2030. Such policies directly impact semiconductor fabs through restrictions on materials, energy consumption standards, and waste management requirements. Compliance with regulations like the EU Chips Act and upcoming updates to F-Gas standards compels semiconductor companies to adopt circular economy principles and develop cleaner manufacturing processes.

Sustainability initiatives incorporate energy-efficient equipment, non-toxic chemical usage, and resource conservation efforts, with growing industry commitment to reduce water usage and emissions. Leading European efforts include the GENESIS project, a multi-stakeholder partnership targeting innovative pathways for low-impact chip manufacturing. These approaches not only enhance environmental stewardship but also contribute to supply chain resilience and access to premium markets with green procurement policies.

APAC markets complement these regulatory trends with their own strong focus on sustainable semiconductor ecosystem development. Supported by investment in AI-powered sustainability solutions, roughly 39% of companies have adopted AI to optimize energy use and material efficiency, notably in China, Taiwan, South Korea, and Southeast Asia. Regional frameworks such as the ASEAN Integrated Semiconductor Supply Chain seek to balance growth with adherence to emerging environmental standards. Moreover, APAC semiconductor hubs actively integrate circularity and energy management into advanced packaging and fabrication workflows, further lowering carbon footprints while maintaining cost competitiveness.

For AMD and other multinational players, navigating this increasingly complex geopolitical and regulatory environment demands proactive engagement with policymakers and collaborative innovation in sustainability technologies. Aligning product development, manufacturing, and supply chain strategies with these frameworks is essential to safeguarding long-term operational viability and investor confidence.

Having outlined the promising growth opportunities in edge AI hardware and advanced packaging alongside the regulatory and sustainability challenges shaping the semiconductor manufacturing landscape, the discussion now transitions to evaluating AMD's competitive positioning and risk profile amidst these broader industry currents.

5. Conclusion: Charting a Course for AI-First Semiconductor Leadership

Decoding AMD's AI Revenue Surge, Competitive Pressures, Supply Risks, and Policy Impacts: Strategic Imperatives for Leadership

This subsection consolidates key insights regarding AMD’s financial momentum in AI-driven segments from 2025 through early 2026, its competitive positioning against entrenched ecosystem leaders, vulnerability to supply chain disruptions, and the evolving export control landscape. Together, these facets delineate the operational context and strategic imperatives for AMD and its stakeholders navigating a complex, geopolitically charged semiconductor environment.

Quantifying AMD’s AI Revenue Expansion Amid a Shifting Semiconductor Terrain

AMD has exhibited remarkable financial acceleration in its AI-related segments during 2025 and into early 2026, driven primarily by its expanding presence in data center GPU deployments and AI-accelerated CPUs. Total revenue advanced from approximately $7.4 billion in Q1 2025 to more than $10 billion in Q1 2026, a year-over-year growth rate well above industry averages for semiconductor peers. Notably, data center revenues, heavily correlated with AI infrastructure adoption, have matured into a $23 billion annual run-rate business, illustrating the strategic pivot away from AMD’s traditional gaming-centric revenue base.
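The two headline figures in this paragraph follow from simple arithmetic. The sketch below is a minimal check, assuming the $10.25 billion Q1 2026 revenue and $5.78 billion data center revenue reported in the Executive Summary, and assuming the run-rate is the latest quarter multiplied by four; variable names are illustrative.

```python
# Quarterly figures in billions of USD, as reported in the document.
revenue_q1_2025 = 7.4
revenue_q1_2026 = 10.25
data_center_q1_2026 = 5.78

# Year-over-year growth: same quarter, one year apart.
yoy_growth = revenue_q1_2026 / revenue_q1_2025 - 1

# Annualized run-rate: latest quarter extrapolated over four quarters.
data_center_run_rate = data_center_q1_2026 * 4

print(f"Total revenue YoY growth: {yoy_growth:.1%}")
print(f"Data center run-rate: ${data_center_run_rate:.2f}B")
```

A run-rate is an extrapolation rather than a forecast: it assumes every quarter matches the most recent one, so it overstates annual revenue if demand softens and understates it if growth continues.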

This surge is underpinned by competitive product launches such as the Zen 5 Ryzen AI series and RDNA 4 GPU architectures, with particular strength in AMD’s MI300X and MI400 AI accelerator families. Analysts forecast AI-specific revenues could reach 'tens of billions' by 2027, at least a tripling from the roughly $5 billion in data center GPU revenue recorded for 2024. Growth at this level marks an inflection point in AMD’s transition to a formidable AI infrastructure contender, fueling investor confidence and enabling it to capitalize on accelerating AI workloads.

Navigating the Entrenched Competitive Moat: AMD Against NVIDIA’s Ecosystem Lock-In

Despite rapid revenue growth, AMD faces persistent competitive headwinds due largely to NVIDIA’s dominant AI chip ecosystem. NVIDIA currently accounts for roughly 77% of AI wafer consumption and over 90% of the discrete GPU market, a position cemented not only by hardware performance but critically by its extensive CUDA software ecosystem and deeper hyperscaler integrations.

NVIDIA's tightly integrated hardware-software platform creates significant lock-in advantages, fostering high switching costs for customers and accelerating recurring cloud service revenues. In contrast, AMD’s open-source ROCm software offers greater flexibility but lacks comparable ecosystem maturity, requiring sustained investment to close the gap.

This entrenched competitive moat compels AMD to price new AI accelerators aggressively below NVIDIA analogues, as exemplified by its MI355 GPU being positioned around 30% cheaper than competitor models. Although AMD’s share of the AI GPU market currently remains near 3%, recent hyperscaler design wins point to incremental gains in traction and perception. The strategic challenge for AMD lies in sustaining innovation momentum and ecosystem development to gradually erode NVIDIA’s advantage without compromising margins.

Assessing Supply Chain Disruptions and Vulnerabilities Impacting AMD’s Operational Resilience

AMD’s expanding AI business operates within a semiconductor supply chain environment marked by heightened geopolitical risks and complex disruptions, notably affecting critical raw materials and advanced fabrication capabilities. Ongoing global tensions, particularly between the U.S. and China, expose AMD to potential bottlenecks in sourcing components and manufacturing capacity.

The semiconductor sector continues to grapple with supply volatility driven by climate-related risks, trade restrictions, and logistical disruptions centered in geopolitically sensitive regions such as East Asia and Eastern Europe. These factors contribute to periodic inventory imbalances and increased lead times, risking production continuity.

To maintain operational stability, AMD and its ecosystem partners are compelled to invest in supply chain resilience through diversified supplier bases, strategic stockpiling of critical materials, and enhanced transparency via AI-driven risk monitoring platforms. Such proactive risk mitigation is crucial given the rapid scaling requirements of AI compute deployments and the sector's limited tolerance for interruption.

Navigating Export Controls and Policy Shifts: Implications for AMD’s Global Market Access

The semiconductor export control landscape has evolved rapidly due to national security concerns, especially relating to advanced AI chips and related manufacturing equipment destined for China and other restricted markets. Recent policy adjustments include more granular, case-by-case licensing for export of next-generation chips such as NVIDIA’s H200 and AMD’s MI325X, contingent on stringent compliance and third-party testing requirements.

While these regulatory relaxations signal a partial recalibration aimed at balancing geopolitical rivalries with commercial realities, the overall trajectory remains towards tighter controls on both hardware and associated tools. Such uncertainties introduce complexity into AMD’s market planning, particularly for sovereign AI deployments and collaboration with Chinese entities.

Concurrently, the shifting export policies necessitate that AMD enhance its compliance infrastructure and strategically navigate potential tariff impacts, dual-use regulations, and evolving supply chain provenance rules. Anticipating regulatory developments and adapting flexible export strategies will be essential to preserving AMD’s growth runway and access to critical international AI markets.

Charting AMD’s Innovation Roadmap to Sustain AI Leadership and Market Share Gains

AMD’s path to stronger AI competitiveness hinges on continued architectural innovation and ecosystem expansion. Strategic milestones include the commercial ramp of the MI400 series GPUs, which extends AMD’s high-performance compute portfolio and narrows the performance gap with incumbent leaders.

Investment in the ROCm™ software stack remains critical to improving developer adoption rates and enhancing compatibility with dominant AI frameworks such as PyTorch and TensorFlow. Additionally, AMD’s collaboration with industry giants like Meta and OpenAI furthers validation and integration of its solutions within next-generation AI infrastructures.

From a financial perspective, the company targets operating margin expansion through improved silicon yields, chiplet design efficiencies, and scalable production processes enabled by partnerships with leading foundries. Moreover, AMD’s CEO has publicly forecasted annual server CPU market growth exceeding 35% through 2030, underlining AI-driven demand as a core growth driver. These coordinated efforts collectively underpin AMD’s strategy to elevate its AI semiconductor standing and deliver shareholder value amid a fiercely competitive landscape.

Having established AMD’s robust AI revenue growth, competitive challenges, operational risks, and regulatory context, the subsequent report section will evaluate broader competitive dynamics and market share trajectories across the AI chip ecosystem, providing clarity on AMD’s relative positioning and potential inflection points.

Conclusion

In sum, AMD’s trajectory exemplifies a successful strategic pivot leveraging technological differentiation, robust financial execution, and critical industry alliances to capture compelling growth opportunities within the AI-driven semiconductor market. The company’s Zen 5 and RDNA 4 architectures have materially enhanced its competitiveness, complemented by high-profile customer engagements such as Meta’s multi-gigawatt Instinct GPU deployment, underscoring AMD’s potential as a credible alternative to entrenched incumbents.

Nonetheless, AMD confronts significant challenges, including Nvidia’s entrenched ecosystem lock-in, competitive pressures from Intel, and supply chain vulnerabilities exacerbated by geopolitical uncertainties and export controls. These factors contribute to heightened stock volatility and valuation scrutiny, suggesting that sustained innovation and ecosystem expansion remain imperative for AMD to consolidate and grow its market share effectively.

Looking ahead, the semiconductor industry’s outlook hinges on navigating maturation and potential saturation in key AI segments, while exploiting emerging frontiers such as edge AI SoCs and sustainable manufacturing innovations. AMD’s continued investment in software like the ROCm platform, advanced packaging technologies, and strategic collaborations will be vital to unlocking these growth horizons.

Ultimately, AMD’s evolution mirrors the broader semiconductor sector’s transition into an AI-first paradigm. By aligning product roadmaps, operational agility, and regulatory compliance, AMD is positioned to contribute meaningfully to shaping the future of AI infrastructure, delivering shareholder value amid transformative technological and market forces.


- A world where everyone can communicate

- Business Impact Analysis for Food Services: Supply Chain Disruptions & Food Safety - Business Impact Analysis Case Study

- The 2025 Risk Survey Report | RapidRatings

- Incorporating Maritime and Geopolitical Risks

- PDF Impact of Geopolitical Risk on the Maritime Supply Chain: A Regional Analysis of the ...

- [PDF] New-generation supply chain - Capgemini

- [PDF] ANDRITZ Non-financial statement 2025

- Supply Chain Risk Management Market Size, Industry Share, Forecast to 2034

- Q1 2026 AI Investment Surge: Financial Performance, Infrastructure Expansion, and Strategic Imperatives Across Tech Ecosystems

- AI Impact Analysis on Chip Industry

- 2025 Artificial Intelligence (AI) Hardware Market Data, Insights, Latest Trends and Growth Forecast to 2034

- Global Semiconductor in Data Centers Market | Infinium Global Research

- AI Compute Shortage Challenges ‘Bubble’ Narrative

- Semiconductor supercycle: the AI chips boom reshaping the global economy (2026)

- Building South Korea’s AI Infrastructure: Data Centers, Compute Capacity, and Innovation Ecosystem

- GPU Market Size & Insights Report [2035]

- Intel stock surges to record highs. These 4 events have powered the company's turnaround.

- Intel's PC Business Survival Hinges on 18A As Shortages Drive AMD & Intel CPU Costs Up By 15%

- INTEL CORPORATION

- Semiconductor- 2025F outlook

- Is Intel stock still a smart investment in 2025?

- Intel transforms the laptop CPU market with Core Ultra 200H/HX - OC3D

- Intel Core Ultra 200 "Arrow Lake-S" NPU to offer 13 TOPS, whole platform up to 37 TOPS - VideoCardz.com

- Intel's Lunar Lake is what the first AI PCs should have been

- August 2025 - Monthly Market Updates

- AMD Radeon Instinct MI300X: Detailed Specifications and Benchmark Ratings - CpuTronic

- AMD Launches Instinct MI300 AI Chips To Challenge Nvidia With Backing From Microsoft, Dell And HPE | CRN

- PDF CONTEXT RESEA - NAND Research

- AMD vs NVIDIA AI Performance: Real-World Analysis 2025

- AMD vs. Nvidia: AI Chip Battle

- The Role and Impact of Advanced Micro Devices (AMD) in the AI Market

- all-press-releases | Bureau of Industry and Security

- Escalation of U.S. Crackdown on Chinese Technology and Telecoms: Emerging Issues

- Semiconductor Tariff Updates: US Policy on China & Korea

- US China Tensions Test Applied Materials Export Risk And AI Ambitions

- The US wants to cut off China’s chip equipment. China says the supply chain will break for everyone.

- U.S.-China Tensions: Regulatory Risk and Strategic Opportunity for Business

- CHIPS, CHINA AND CHOKE POINTS

- SK Hynix Announces $12.9 Billion Investment in Advanced Chip Packaging Facility Amid AI Memory Boom

- US Requires Third-Party Lab Review for Nvidia H200 Chip Exports to China

- U.S. Tech Export Policy Shift and Its Strategic Implications

- Semiconductor Rally Continues- 3 Stocks In The Spotlight Ahead Of Earnings

- Tech stocks today: Semiconductor company earnings in focus amid AI boom, Musk vs. Altman fight continues

- The Tech Download: Chip stocks surge in ‘historic’ month as investors’ AI buildout concerns ease

- Semtech (SMTC) Soars 5.07% on AI-Fueled Semiconductor Surge: Will the Rally Continue?

- The chip sector is on a historic tear, fueled by some unsuspecting stocks

- AMD and Intel Are Leading a Chip Stock Rally Today. Here's Why.

- Broadcom, AMD Lead Chip Stocks Rally Tuesday

- A Surprising Shift in Semiconductor Stocks You Need to Know!

- Wall Street Bullish on Nvidia and AMD as AI Chip Prospects Soar By Quiver Quantitative

- AMD gives upbeat forecast as AI data centre demand surges

- 3 Semiconductor Stocks Beyond NVDA: AMD, AVGO, TSM

- Navigating 2025 AI cloud investment - myqcloud.com

- Analysts See AMD Poised To Capture More AI Data Center Demand

- Buy, Sell, or Hold AMD Stock? Key Tips Ahead of Q3 Earnings — TradingView News

- AMD Artificial Intelligence: 2025 to 2026 Breakout

- Advanced Micro Devices Inc (AMD) Stock Message Board | InvestorsHub

- 2 Extraordinary Artificial Intelligence (AI) Stocks Down 43% and 31% to Buy Before They Turn Around in 2025 | The Motley Fool

- Semiconductors-Increasing-Governmental-and-Regulatory ...

- PDF SILICON SELLOUT - Roslyn Layton

- PDF 2026 Key Issues and Outlook for Korea - yulchonllc.com

- AI and Semiconductor Markets in 2026: Navigating Growth, Challenges, and Global Investment Shifts

- 5 undervalued semiconductor stocks

- PDF U.S.-China Economic and Trade Relations (Year in Review)

- South Korea Radio-frequency (RF) Power Semiconductor Devices Market Strategic Growth and Forecast Trends

- Semiconductor Market | Size, Share, Growth | 2024 - 2030

- South Korea’s AI Investment Outlook: Assessing Growth Potential Amid Policy and Market Shifts

- PDF 2024 Semiconductor Spotlight - Moss Adams

- Lisa Su's Campaign to Challenge NVIDIA's AI Dominance

- Tech stocks today: AMD and other tech stocks trade at record highs, Anthropic releases its newest Claude Opus model

- Tech stocks today: Anthropic releases its newest model, Claude Opus 4.7

- Meta Extends Broadcom Deal to Power AI Growth

- CoreWeave and Meta Deepen AI Partnership With $21 Billion Infrastructure Deal

- CoreWeave, Meta strike another US$21 bil deal for AI computing

- Nebius, Meta Agree to $27 Billion AI Infrastructure Pact

- Meta’s 6GW AMD Chip Deal Supercharges AI Compute Race

- Meta, AMD reach deal to expand AI infrastructure - UPI.com

- Meta Signs $100B AI Chip Deal With AMD

- Advanced Micro Devices (AMD) Statistics & Valuation

- AMD Stock Today April 24: Record High Above $300 Fuels AI Rally | Meyka

- At Over $280, Is It Too Late To Buy AMD Stock?

- AMD Announces Major Technology Partnerships at ... - Cloudfront.net

- AMD's Gaming Income Growth Expected to Slow Down: What Lies Ahead? | Bitget News

- AMD Stock Analysis: Anatomy of 235% AI-Driven Rally

- AVGO or AMD: Can These AI Chip Challengers Outrun Nvidia by 2030?

- AMD and INTC Stocks Surge on Announced CPU Price Increases

- Artificial Intelligence In Military Market | Industry Report, 2030

- South Korea AI Chip Market worth $14.68 billion by 2032

- AI Chip Market worth $564.87 billion by 2032 - Exclusive Report by MarketsandMarkets™

- Artificial Intelligence Chip Market Size, Report 2033

- AI Chip Market Size, Share and Industry Trends, 2035

- Artificial Intelligence Chipset Market | Industry Report, 2030

- NVIDIA's SWOT analysis: stock poised for growth amid AI boom, supply challenges By Investing.com

- The AI Chip Revolution: Broadcom vs. Marvell. Who Will Lead the Charge?

- Marvell Technology's SWOT analysis: AI chip leader faces growth challenges By Investing.com

- Are AI Chips the Next Big Investment Opportunity? Discover This Underrated Player!

- AMD Delivers Leadership Portfolio of Data Center AI Solutions ...

- PDF INDUSTRY RESEARCH Launch Report: Advanced Computing

- Accelerating AI and HPC With AMD Instinct™ MI300X

- The ComfyUI Revolution: Comprehensive Analysis & Market Report

- AMD's Strategic Alliances in AI: A Catalyst for Long-Term Growth

- AMD reports fourth-quarter and full year 2024 financial results

- AMD Reports Fourth Quarter and Full Year 2024 Financial Results

- PDF AMD's Software Crisis Analysis - unlockgpu.com

- PDF Accelerate AI Time to Value With AMD Instinct MI300X ... - Oracle

- SK Hynix Shares Outlook, AI Semiconductor Growth, and Global Technology Trends | Trader

- US small caps – Reasons to be cheerful - BNPP AM Hong Kong - EN

- US small caps – Reasons to be cheerful - Malaysia Institutional - BNPP AM

- US small caps – Reasons to be cheerful - BNP Paribas Asset Management - Portugal

- The Kobeissi Letter

- 2026 US Equity Outlook Great Potential

- AI stocks dominate S&P 500 as infrastructure boom pushes weight near 45% - Cryptopolitan

- PDF The AI Infrastructure Investment Shift: From Hype to ROI

- AI Stocks Q1 2026: Tech Shares Fall, Semiconductor & Infrastructure Gain - News and Statistics - IndexBox

- Samsung Electronics Profit Surges as AI Chip Demand Grows

- SOXL Stock Price Prediction 2025: Analyzing the Semiconductor Bull Run

- AMD vs. Intel: Which Stock Wins the Next Phase of the Chip War? | TIKR.com

- Earnings Preview: What to Expect From Advanced Micro Devices' Report

- AMD Price Prediction: Where Will The Stock be in 2027

- AMD Receives Price Target Boost from Mizuho, Rating Maintained |

- New Analyst Forecast: $AMD Given $150.0 Price Target | Nasdaq

- Lobbying Update: $60,000 of ADVANCED MICRO DEVICES (AMD) lobbying was just disclosed | Nasdaq

- Advanced Micro Devices (AMD) Stock Forecast & Price Target

- Edge AI For Real-Time Decision Making

- PDF Be Read in Conjunction With the Section Headed "Warning" on The Cover ...

- Edge AI Market to Witness Strong Demand as Technology Convergence, Secure Infrastructure Needs and Sustainability Goals Accelerate Adoption

- Edge AI Hardware Market Size, Share | Report [2034]

- Top 15 Companies in Global Edge Artificial Intelligence Chips Market

- Top Companies in Edge AI Software Market - Microsoft (US), Google (US), AWS (US), IBM (US) and NVIDIA (US)

- Ai Semiconductor Industry Statistics: Market Data Report 2026

- Exploring Edge AI Solution Market Evolution 2025-2033

- Ultra-low-power Edge AI SoC Market Report:By Key Players, Types, Application, Forecast to 2031

- PDF Health Insurance Artificial Intelligence/Machine Learning Survey ...

- PDF Domain-Specialized Agent Systems in Enterprise AI - Zenodo

- Knowledge Management Software Market Size, Industry Share | Forecast 2034

- Introducing Gemini: The Future of AI

- A Cost-Benefit Analysis of On-Premise Large Language Model Deployment: Breaking Even with Commercial LLM Services

- PDF The State of Sovereign AI

- Small Language Model Market Size, Share and Global Forecast to 2032 | MarketsandMarkets

- Amd Compute for Gpu Computing | Restackio

- Top AI Developer Tools AI Tools (542 Tools) | Creati.ai

- Lamini AI Boosts LLM Memory Accuracy to 95% with Novel Tuning Method - WinBuzzer

- PDF Training LLMs from Scratch_MosaicML.pptx - edX

- PDF O Evaluating and Improving the Efficiency of D Networks

- PDF LLMOPs

- PDF CSET- Re-Shoring Advanced Semiconductor Packaging

- PDF Templates Sample layouts - Stanford University

- PDF The Growth of Advanced Packaging: An Overview of the Latest Technology ...

- PDF Advanced Packaging Technologies and Designs - Department of Energy

- PDF Q1 2026 Revenue

- Techmeme: Source: Alphabet is in talks with Blackstone, KKR, and EQT to give their portfolio companies AI model access, after OpenAI's and Anthropic's JVs with PE firms (The Information)

- Techmeme: CRM software company Freshworks said it plans to cut 11% of its workforce, or ~500 jobs, as it deals with AI's impact; FRSH has dropped ~26% in 2026 (Anhata Rooprai/Reuters)

- Techmeme: Nace.AI, which lets companies build specialized AI models tailored to their business' mission and language, raised a $21.5M seed led by Walden Catalyst (Chris Metinko/Axios)

- AMD Q1 Revenue Rises 38% as Data Center Becomes Core Growth Engine

- PDF Q1 Fiscal 2026 Earnings Presentation - Rockwell Automation