AI-Driven Memory Demand: Unraveling the Supply Chain Impact on Manufacturers

An In-depth Analysis of How Artificial Intelligence Growth Shapes Memory Chip Supply and Pricing

Executive Summary

This analysis elucidates how the rapid expansion of artificial intelligence, particularly the growth in large language models and inference workloads, has driven unprecedented demand for high-performance memory chips including DRAM, HBM, and NAND flash. The resulting surge has disrupted traditional supply-demand dynamics, causing sharp price increases and significant supply constraints that are reshaping the memory market landscape through at least 2027.

Memory manufacturers are responding with strategic capacity expansions, technological advancements such as the transition from HBM3e to HBM4, and the establishment of long-term supply agreements to mitigate volatility. Despite these efforts, supply chain bottlenecks in raw materials, energy, and packaging components continue to limit scalability. This supply tightness not only elevates costs but also trickles down to impact AI hardware manufacturers and the broader semiconductor ecosystem, influencing product cycles, performance efficiencies, and downstream infrastructure deployment.

Introduction

The accelerating adoption of artificial intelligence technologies has exerted profound pressure on the global memory chip market, a critical segment underpinning AI compute infrastructure. High-performance memory types such as DRAM, High Bandwidth Memory (HBM), and NAND flash are essential for training and deploying increasingly sophisticated AI models, which require vast volumes of fast, reliable memory to manage extensive datasets and persistent context states. This evolving demand paradigm diverges sharply from historical patterns, necessitating a comprehensive analysis of its effects on memory supply chains and market dynamics.

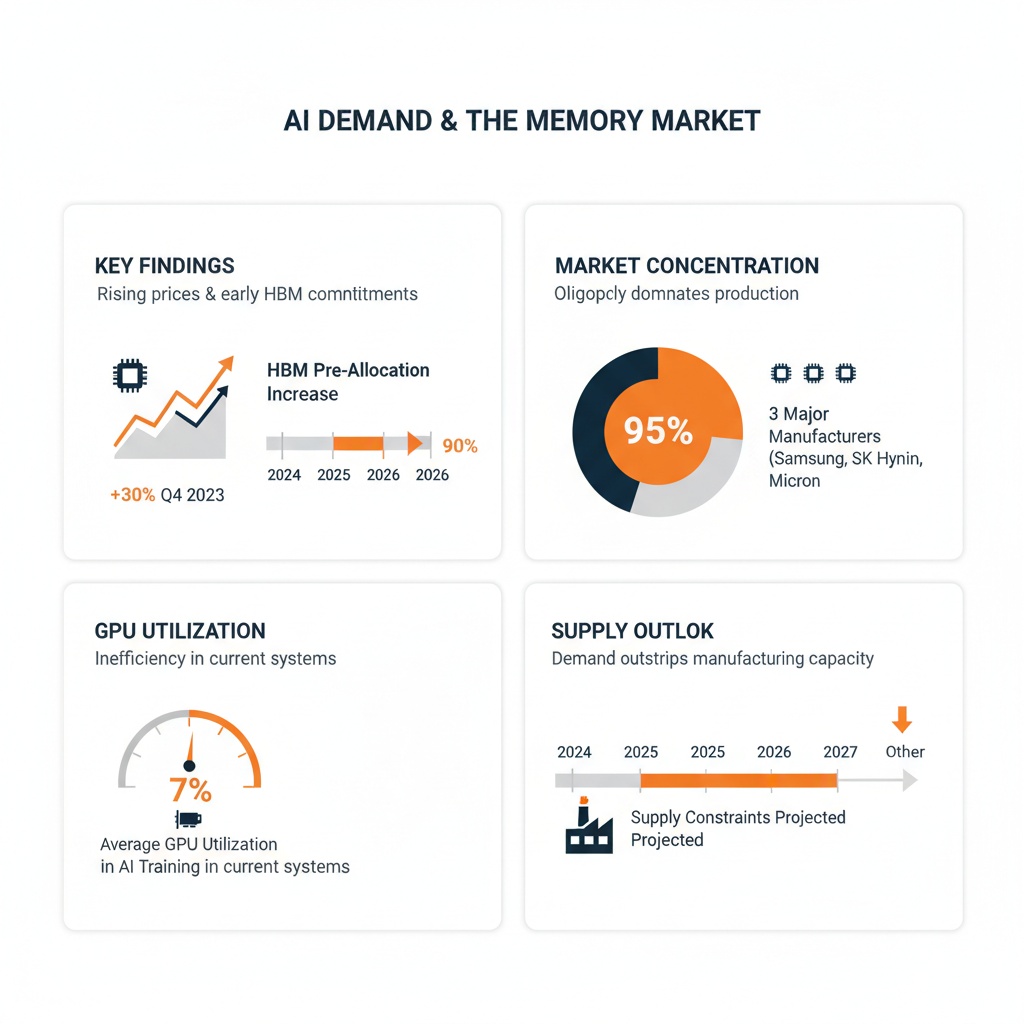

Infographic Image: AI-Driven Memory Market Surge: Supply Challenges and Industry Response

This document aims to analyze the multifaceted impact of AI-driven memory demand on manufacturers, focusing on market price trends, supply limitations, manufacturing capacity challenges, and strategic industry responses. By incorporating quantitative data on price escalations and supply shortages alongside manufacturer investment initiatives and supply chain bottlenecks, the analysis provides a grounded understanding of current constraints and anticipated developments through 2027.

The scope of this analysis encompasses the interplay between AI workload characteristics—distinguishing between training and inference demands—and their influence on memory market segments. It further examines the concentrated nature of memory suppliers and the subsequent fragility within production capacity, alongside the strategic adaptations undertaken by manufacturers to address these pressures. This sets the foundation for assessing downstream implications on AI hardware performance, cost structures, and the broader semiconductor ecosystem.

1. AI Demand Surge and Its Direct Impact on Memory Supply

The rapid proliferation of artificial intelligence (AI) technologies has fundamentally reshaped the landscape of memory chip markets. Driven primarily by the exponential growth in large language models and increasingly sophisticated AI inference workloads, demand for high-performance memory components such as DRAM (Dynamic Random-Access Memory), HBM (High Bandwidth Memory), and NAND flash has surged beyond traditional projections. This unprecedented appetite for memory resources disrupts the conventional supply-demand equilibrium, precipitating acute shortages and price escalations that ripple across the semiconductor ecosystem. Understanding these dynamics is essential for grasping how AI workloads specifically stress memory supply chains and drive market volatility in ways not seen in previous memory demand cycles.

This memory-centric upheaval arises from the unique characteristics of AI compute demands, which differ markedly from traditional computing workloads. AI applications impose continual requirements for vast memory capacity coupled with ultra-high bandwidth and low latency, especially as models grow larger and context sizes expand persistently. Consequently, the memory market, concentrated among a handful of dominant manufacturers, exhibits significant fragility under these stressors. This section dives deeply into quantitative price trends across the DRAM, HBM, and NAND segments, dissects how distinct AI workloads—training versus inference—scale memory needs, and contextualizes the supply footprint and manufacturer concentration that constrain the market's ability to adapt swiftly. This foundation sets the stage for appreciating subsequent strategic industry responses and long-term supply implications.

Quantitative Price Increases and Market Trends for DRAM, HBM, and NAND

Since late 2024, memory chip prices have surged in ways that clearly signal capacity strain linked to AI demand. Contract prices for DRAM, particularly server-grade DDR5, rose by as much as 172% year-over-year by Q3 2025, with some monthly price jumps nearing or exceeding 100%. Industrial-grade DDR5 prices nearly doubled within a single year, as highlighted by industry analyses (d3, d6). The inflation is not limited to DRAM; it spans the entire high-performance memory spectrum. HBM, vital for AI accelerator architectures because of its high bandwidth and low power consumption, faces severe shortages as production prioritizes AI infrastructure needs. Major semiconductor participants report near-complete pre-allocation of HBM capacity through 2026, effectively locking out other market segments (d3, d6). Between Q3 2024 and Q3 2025, HBM prices increased by approximately 150% and NAND prices by around 100%, underscoring the pervasive impact of AI demand across memory types [Chart: Year-Over-Year Price Surge in DRAM, HBM, and NAND].
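To make these percentages concrete, the short Python sketch below converts each quoted year-over-year increase into a price multiplier. The percentages are the ones cited above; the function name `yoy_multiplier` is an illustrative choice and does not come from any cited source.

```python
def yoy_multiplier(pct_increase: float) -> float:
    """Convert a year-over-year percentage increase into a price multiplier."""
    return 1.0 + pct_increase / 100.0


# Year-over-year increases quoted in the text (Q3 2024 to Q3 2025).
increases = {"server-grade DDR5 DRAM": 172.0, "HBM": 150.0, "NAND": 100.0}

for segment, pct in increases.items():
    print(f"{segment}: +{pct:.0f}% -> {yoy_multiplier(pct):.2f}x the prior-year price")
```

Read this way, a 172% increase means a buyer pays roughly 2.7 times the prior-year contract price, not 1.7 times.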

NAND flash markets, particularly enterprise SSDs employed for AI "warm tier" context memory, are similarly strained. The demand for NAND, driven by the need to store growing volumes of AI conversational histories, embeddings, and persistent state data used during inference, has triggered sharp supply bottlenecks and price hikes. Analysts anticipate NAND prices will remain elevated well into 2026, with some SSD market segments experiencing supply limitations paralleling those in DRAM and HBM (d5, d6). The price trajectory across these memory tiers illustrates a shift from cyclical, modest price fluctuations toward a structural upcycle underpinned by AI-driven demand. This fundamentally alters memory pricing paradigms and signals a prolonged phase of elevated costs and tight supply.

Market data reflecting these trends consistently show that AI workloads have imposed a multi-year supercycle on memory prices. By early 2026, leading memory manufacturers cited sustained commitments to HBM and AI-centric DRAM products, indicating that supply will remain constrained through at least 2027. These pricing developments point to a market in which demand outstrips supply, pushing contract prices to levels surpassing other commodity benchmarks and raising costs for downstream industries that rely on memory components.

AI Workloads Driving Memory Demand: Training, Inference, and Persistent Context Size

Crucial to understanding the memory supply shock is the differentiation between AI training and inference workloads, each exerting distinct pressure on memory demand profiles. Training large language models involves massive parallel processing and intense utilization of HBM and high-end DRAM to support extremely high memory bandwidth and low latency for rapid parameter updates. These workloads consume prodigious amounts of memory capacity during relatively concentrated training phases, often involving billions of parameters and requiring high-speed multi-channel memory solutions (d5, d6).

However, the more profound and sustained demand growth emerges from inference workloads. Unlike periodic training, inference represents continuous, large-scale deployment of AI models in production environments that necessitate always-on availability of substantial model state, input data, and prior conversational context. This persistence inflates memory consumption over time, as inference systems maintain increasingly large working memory states and historical data vectors to enhance response accuracy and contextual relevance (d5). Consequently, inference demand scales memory needs beyond mere compute cycles, emphasizing steady, high-volume access to memory resources capable of supporting long context lengths and rapid retrieval.

This persistent context size challenge compels the industry to stratify its memory hierarchy into tiers: ultra-fast HBM for immediate computation, supplementary DRAM for server and system memory, and NAND flash-based enterprise SSDs acting as "warm" context memory for slower but cost-effective storage of large AI states (d5). This tiered approach addresses the prohibitive cost of holding all data in HBM by offloading sizable but delay-tolerant AI context data to flash storage, balancing performance with economic viability. The tiering also explains why memory supply shortages appear not only in premium HBM but also ripple downward into DRAM and NAND markets, as demand cascades through the entire memory stack.
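The cascade through the tiers can be illustrated with a minimal sketch that fills the fastest tier first and spills the remainder into slower ones. The tier capacities and the 5,000 GB demand figure are hypothetical values chosen for illustration, and the greedy placement rule is a simplification, not a description of any vendor's actual policy.

```python
from dataclasses import dataclass


@dataclass
class Tier:
    name: str
    capacity_gb: float  # hypothetical capacity, for illustration only


def place_across_tiers(demand_gb: float, tiers: list[Tier]) -> dict[str, float]:
    """Fill the fastest tier first, then spill the remainder to slower tiers."""
    placement: dict[str, float] = {}
    remaining = demand_gb
    for tier in tiers:  # tiers are ordered fastest to slowest
        used = min(remaining, tier.capacity_gb)
        placement[tier.name] = used
        remaining -= used
    placement["unplaced"] = remaining  # demand that no tier could absorb
    return placement


# Hypothetical per-server capacities, ordered fastest to slowest.
tiers = [Tier("HBM", 192.0), Tier("DRAM", 2048.0), Tier("NAND SSD", 30720.0)]
print(place_across_tiers(5000.0, tiers))
```

Under these assumptions, growth in context size beyond the HBM capacity lands entirely on DRAM and then NAND, which is the mechanism by which demand pressure propagates down the stack.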

Memory Supply Market Size and Manufacturer Concentration: Illustrating Supply Fragility

The memory chip industry’s concentrated structure intensifies the fragility of supply amid surging AI demand. Globally, DRAM and HBM production is dominated by three major manufacturers: Samsung Electronics, SK hynix, and Micron Technology. Together, these firms control over 95% of the high-performance memory market and possess the specialized, capital-intensive fabrication capabilities required for advanced memory technology nodes (d3, d5). Such concentration means that any strategic shift, such as the reallocation of capacity toward HBM for AI accelerators seen in recent years, substantially reduces the availability of traditional DRAM and NAND components for other sectors.

Furthermore, the overall volume of memory production remains small relative to the scale of AI-induced demand surges. Unlike more commoditized semiconductor categories, the manufacturing processes for DRAM and especially HBM require specialized wafer-level packaging, vertical stacking, and integration techniques that significantly constrain scalability and ramp times (d7, d8). For example, HBM production entails assembling multiple DRAM dies vertically interconnected by through-silicon vias (TSVs), a complex procedure with inherently lower yield rates and throughput constraints. This technical complexity limits short-term capacity expansion, even as demand skyrockets.
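One simplified way to see why vertical stacking lowers yield: if each layer (die plus its bonding step) succeeds independently with probability y, a stack of n layers succeeds with probability of roughly y raised to the power n. The sketch below rests on that independence assumption and uses a hypothetical 98% per-layer yield; real HBM yield models are more involved, and these figures do not come from the cited sources.

```python
def stack_yield(per_layer_yield: float, layers: int) -> float:
    """Compound yield of a die stack, assuming independent per-layer outcomes."""
    if not 0.0 <= per_layer_yield <= 1.0:
        raise ValueError("per_layer_yield must be between 0 and 1")
    if layers < 1:
        raise ValueError("layers must be at least 1")
    return per_layer_yield ** layers


# Hypothetical per-layer yield of 98%, evaluated for three stack heights.
for layers in (4, 8, 12):
    print(f"{layers}-high stack: {stack_yield(0.98, layers):.1%} compound yield")
```

Even a high per-layer yield erodes as stacks grow taller, which is one reason capacity cannot be added as quickly for HBM as for planar DRAM.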

The market's limited number of suppliers also leads to strategic production prioritization. As major players increasingly channel their constrained capacity toward high-margin AI-tailored memory, traditional applications in PCs, consumer electronics, and automotive sectors encounter supply restriction and longer lead times (d3, d6). This re-prioritization exacerbates supply bottlenecks, raising barriers for new entrants and intensifying systemic risks related to geopolitical tensions and supply chain disruptions. Collectively, these factors confirm the memory market’s sensitivity to demand shocks and underscore why AI’s ascending memory requirements have triggered a structural realignment of supply dynamics.

2. Memory Manufacturers’ Response: Supply Chain, Capacity, and Strategic Dynamics

The rapid and sustained surge in demand for high-performance memory chips, propelled by AI workloads, has compelled memory manufacturers to undertake multifaceted strategic responses to mitigate supply constraints and position themselves for long-term growth. This section navigates the complex interplay of capacity expansions, technological transitions, and supply chain challenges that memory producers are facing as they strive to align production capabilities with the intensified, persistent market requirements. Building on the demand realities and pricing pressures outlined previously, the analysis here focuses on how the industry is operationalizing investments and innovations amid bottlenecks that extend beyond mere production lines into raw materials, energy consumption, and packaging components. These factors collectively shape the trajectory of memory availability and cost through at least 2027. Notably, price increases have been steep across key memory components, with server-grade DDR5 DRAM prices rising by 172%, HBM by 150%, and NAND by 100%, underscoring the financial pressures driving these strategic initiatives [Table: Memory Components Price Changes].

Given that memory chips such as DRAM, High Bandwidth Memory (HBM), and NAND flash are foundational to AI-centric infrastructure, their supply-side dynamics are critical determinants of the broader semiconductor ecosystem’s performance and resilience. Manufacturers like Samsung Electronics, SK hynix, and Micron are not only expanding wafer fabrication capacity but also embracing next-generation memory technologies to facilitate higher bandwidth, lower latency, and improved energy efficiency tailored for AI inference and training applications. Concurrently, long-term supply agreements and shifts toward market consolidation are influencing not just availability but pricing stability, which in turn affects downstream AI hardware manufacturers and cloud operators. This section provides a deep dive into these adaptive strategies with an emphasis on manufacturer-specific initiatives, systemic bottlenecks, and the evolving contractual landscape.

Capacity Expansions and Technology Upgrades: Transitioning from HBM3e to HBM4 and Beyond

To address the unprecedented memory demand driven by AI, leading manufacturers are aggressively scaling fabrication capabilities while simultaneously advancing memory technology. Capacity expansions are focused on both scaling existing process nodes for traditional DRAM and NAND products and pioneering new generations of High Bandwidth Memory (HBM)—a critical component for AI accelerators and high-performance computing. Notably, the industry is witnessing a substantive transition from HBM3e to HBM4 technology, which promises significant improvements in memory bandwidth, latency reduction, and energy efficiency tailored for AI inference workloads. As outlined in recent technical assessments (d6, d8), HBM4 integrates architectural innovations such as optimized memory controllers, enhanced interface protocols, and advanced stacking via through-silicon vias (TSVs), enabling vertical integration of multiple memory dies with wider bus widths and reduced signal travel distances. These capabilities directly address latency and power constraints inherent in earlier HBM variants, making HBM4 a pivotal enabler for next-generation AI accelerator platforms.
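The effect of a wider bus on bandwidth follows from simple arithmetic: peak bandwidth per stack is the interface width in bits multiplied by the per-pin data rate, divided by eight to convert bits to bytes. The sketch below applies that formula to two configurations. The interface widths and pin rates are assumptions chosen to resemble a narrower and a wider HBM generation; they are not taken from the cited sources.

```python
def peak_bandwidth_gbs(bus_width_bits: int, pin_rate_gbps: float) -> float:
    """Peak bandwidth per stack in GB/s: width (bits) x per-pin rate (Gb/s) / 8."""
    return bus_width_bits * pin_rate_gbps / 8.0


# Assumed, illustrative configurations (not figures from the cited sources).
configs = {
    "narrower interface (assumed 1024-bit at 9.6 Gb/s per pin)": (1024, 9.6),
    "wider interface (assumed 2048-bit at 8.0 Gb/s per pin)": (2048, 8.0),
}

for label, (width, rate) in configs.items():
    print(f"{label}: {peak_bandwidth_gbs(width, rate):.1f} GB/s per stack")
```

Under these assumptions the wider interface delivers more bandwidth at a lower per-pin rate, which is consistent with the power and latency benefits attributed to wider buses above.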

Samsung and Micron stand at the forefront of HBM4 development, with Samsung's robust vertical integration enabling efficient control over both DRAM fabrication and controller design, affording improvements in bandwidth utilization and thermal management specific to AI applications. SK hynix is concurrently scaling production of HBM3e while investing in HBM4 pilot lines, signaling industry-wide momentum toward adopting these advanced memory stacks. However, technology upgrades do not come without challenges; for instance, integrating HBM4 necessitates not only process node refinement but also enhanced packaging and cooling solutions to manage higher power densities without performance throttling. Additionally, some manufacturers are collaborating with AI chip vendors (e.g., Marvell’s custom HBM compute architecture referenced in d9) to develop specialized memory subsystems optimized for cloud-scale AI accelerators, highlighting a co-design trend between memory providers and compute silicon firms. This synergistic approach accelerates innovation and aligns memory capabilities tightly with evolving AI workload demands.

Beyond HBM4, manufacturers are exploring complementary technology trajectories, including energy-efficient memory access schemes and interface optimizations that enable dynamic bandwidth allocation, traffic shaping, and real-time adaptive controls. These innovations collectively enhance effective memory bandwidth and reduce power consumption per operation—critical factors as AI data centers scale their infrastructure amid increasing energy and operational cost pressures. The transition to advanced memory nodes and stacking also entails significant capital expenditures and yield challenges, underscoring the delicate balance memory makers must maintain between aggressive capacity increases and manufacturing quality assurance.

Supply Chain Bottlenecks: Raw Materials, Energy Constraints, and Packaging Component Shortages

While capacity expansions and technological upgrades are underway, the memory manufacturing supply chain remains stressed by persistent bottlenecks that constrain ramp-up speeds and escalate production costs. These bottlenecks span critical raw materials, including semiconductor-grade silicon wafers, specialty chemicals, and substrates such as T-glass fiberglass cloth used in IC packaging. According to industry reports (d15, d21, d25), scarcity and longer lead times for high-purity materials necessary for advanced node processes are increasingly limiting throughput expansion, even as wafer fabs extend operating hours and add shifts to increase output. Energy consumption poses another complex challenge; large-scale fabrication facilities require vast and reliable energy supplies, but rising electricity costs and sustainability targets have led to operational constraints in some regions, compelling manufacturers to optimize energy efficiency vigorously or seek alternative energy sources.

Packaging and substrate shortages represent a particularly acute bottleneck with multi-layered impacts on memory production cycles. The advanced 2.5D and 3D packaging techniques essential for HBM stacks depend heavily on substrate availability and precision assembly capabilities. Component shortages—encompassing copper foils, organic substrates, and solder materials—complicate package fabrication and test cycles, with capacity limits in specialized assembly plants delaying product shipments. Given that packaging complexity scales with higher memory tiers like HBM4, these supply challenges have direct downstream effects on the availability timeline for newly developed memory products. Moreover, logistics disruptions and geopolitical factors continue to complicate supply chain resilience, occasionally leading to inventory imbalances and reactive pricing adjustments across the memory supply chain.

Manufacturers are responding to these bottlenecks with strategic supplier diversification, vertical integration where feasible, and investments in supply chain digitization and risk management platforms. However, improvements are gradual due to the capital-intensity and long lead times inherent in materials production and fabrication infrastructure. These ongoing constraints reinforce market expectations that supply shortages for high-performance memory types, particularly HBM and advanced DRAM, are likely to persist through 2027, sustaining pricing pressures and catalyzing a reevaluation of supply chain robustness.

Long-Term Supply Agreements and Market Consolidation: Stabilizing Availability Amid Demand Volatility

In response to the volatility and mismatch between surging AI-driven memory demand and constrained supply capacity, memory manufacturers and key consumers increasingly pursue long-term supply agreements as a strategic tool to secure component availability and stabilize pricing structures. Unlike traditional short-term spot purchases that dominated memory markets in prior cycles, these long-term contracts, often spanning multiple years and incorporating volume commitments, provide manufacturers with revenue visibility to support capital investments in capacity and technology upgrades. For hyperscale cloud operators and AI hardware vendors, such agreements ensure prioritized access to scarce memory resources, mitigating procurement risk in a fiercely competitive market environment.

Market consolidation further amplifies the strategic impact of these agreements. With the DRAM manufacturing landscape dominated by a handful of leading players (primarily Samsung Electronics, SK hynix, and Micron) and HBM technologies produced by a subset of these alongside emerging specialized suppliers, the bargaining power of both manufacturers and large-volume consumers has increased. Consolidation has heightened interdependence: manufacturers are compelled to optimize production schedules and prioritize high-paying, long-term clients, while customers use their contractual position to negotiate better pricing or guaranteed allocation during constrained supply periods (d34, d36). This dynamic creates a 'two-way squeeze' that pressures mid-tier buyers and smaller players, who risk limited access and escalating costs.

Furthermore, these long-term arrangements encourage collaborative innovation and co-development efforts. For example, partnerships involving memory producers and major AI silicon firms (e.g., Marvell’s collaboration involving Samsung, SK hynix, and Micron as detailed in d9) exemplify a shift toward integrated supply chain ecosystems rather than transactional supplier relationships. Such collaborations enable tailored memory solutions optimized for specific AI workloads, enhanced packaging and thermal designs, and implementation of energy-efficient memory subsystems. However, with suppliers prioritizing these strategic accounts, the broader market may encounter segmented availability profiles—with flagship customers benefiting from the latest technologies and capacity expansions first, while other market segments adapt to stretched supply conditions.

This consolidation and contract-driven approach to supply management also introduces complexity in pricing dynamics. While manufacturers aim for price stability through binding agreements, the ongoing imbalance between supply and AI-driven demand sustains upward pressure on prices. Experts forecast that this environment will persist through 2027, requiring continuous investment and strategic alignment across the value chain to enable greater supply elasticity and long-term market stabilization.

3. Broader Implications for AI Manufacturers and Infrastructure Ecosystem

The ongoing memory supply constraints ripple far beyond chip manufacturers and markets, deeply affecting AI hardware producers and the broader infrastructure ecosystem responsible for powering AI workloads. While Sections 1 and 2 have laid out the pressures on memory supply and fabrication capacity, the consequential operational impact on AI manufacturers—who rely heavily on high-performance DRAM, HBM, and NAND flash memory—is profound and multifaceted. These repercussions underscore the systemic vulnerabilities inherent in AI infrastructure, revealing bottlenecks not only in hardware acquisition but also in performance efficiency, cost management, product innovation, and even in interrelated semiconductor component availability.

Understanding these downstream effects is critical to capturing the full cycle from supply through demand to end-user impact. AI equipment manufacturers must grapple with memory shortages that affect GPU server utilization and throughput, compel re-engineering of product lifecycles, and induce strategic shifts in sourcing and design. Furthermore, memory constraints intersect with broader semiconductor shortages, such as those affecting CPUs and substrates, exacerbating hardware procurement difficulties and amplifying the complexity of ecosystem-wide resource allocation. This section explores these dimensions, covering the operational challenges and strategic adaptations shaping the AI infrastructure landscape in 2026 and beyond.

Impact on GPU Server Performance and Hardware Utilization

AI hardware performance, particularly in GPU-accelerated servers, is intimately tied to the availability and bandwidth of memory subsystems. Contemporary AI training and inference models rely on massive datasets and high-speed data exchanges between processors and memory, making memory shortages a direct impediment to optimal utilization. According to the reference documents (d3, d9, d20), memory bottlenecks manifest as lower GPU utilization rates, with only approximately 7% of AI teams reported to achieve GPU utilization above 85% at peak workloads. This underutilization stems primarily from insufficient memory bandwidth to keep pace with GPU processing speeds, causing GPUs to stall while awaiting data. Such inefficiencies not only degrade throughput but also increase the total cost of ownership, as expensive GPU resources remain idle for significant portions of operational time.
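The cost effect of idle GPUs can be approximated by dividing the hourly cost of a GPU by its utilization, which gives the cost per hour of useful work. The sketch below assumes a hypothetical hourly cost of 4.00 (in arbitrary currency units) and treats utilization as the fraction of time the GPU is doing productive work; both are simplifications for illustration.

```python
def cost_per_useful_gpu_hour(hourly_cost: float, utilization: float) -> float:
    """Effective cost of one hour of productive GPU work at a given utilization."""
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in the range (0, 1]")
    return hourly_cost / utilization


HOURLY_COST = 4.00  # hypothetical cost per GPU-hour, arbitrary currency units

for utilization in (0.85, 0.60, 0.40):
    effective = cost_per_useful_gpu_hour(HOURLY_COST, utilization)
    print(f"utilization {utilization:.0%}: {effective:.2f} per useful GPU-hour")
```

Under this simple model, a fleet running at 40% utilization pays more than twice as much per useful hour as one running at 85%.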

Storage feeding GPUs emerges as a concurrent bottleneck. High-performance AI workloads demand swift and reliable data pipelines from storage through memory to compute units. When memory capacity and speed limitations compound with storage latency or bandwidth challenges, the overall performance cascade intensifies. For instance, multi-GPU server clusters often employ hybrid storage architectures combining local NVMe SSDs with shared storage systems tailored to specific workload phases like checkpointing and dataset staging (d9). Yet, persistent memory scarcity constrains the provisioning of local high-bandwidth memory solutions such as HBM3, limiting the ability to optimize these architectures. Consequently, AI infrastructure providers face an operational paradox: augmenting computational power without matching memory provisioning results in diminishing returns on hardware investments.

Moreover, these utilization inefficiencies create ripple effects on deployment timelines and service-level agreements (SLAs) within data centers. The delay in achieving expected processing throughput may yield longer model training cycles and increased inference latencies. Industry practitioners report that the inability to fully saturate GPU resources due to memory shortages slows iterative AI experimentation, reducing innovation velocity. This bottleneck further incentivizes manufacturers and integrators to reevaluate architectural designs, emphasizing balanced component provisioning and reconsidering memory hierarchies to mitigate underperformance risks.

Cost Structures, Product Cycles, and Strategic Adjustments in AI Equipment Manufacturing

Memory supply scarcity introduces significant volatility and upward pressure on the cost structures of AI hardware manufacturers. The need to source limited DRAM, HBM, and NAND flash chips at premium prices inflates bill of materials (BOM) costs, which in turn compresses profit margins or necessitates higher end-product pricing that risks dampening demand. As documented in reference d24, manufacturers are accelerating product cycles and prioritizing higher-margin, performance-dense models to offset rising component costs. This strategic shift reflects a dual response to market pressures: reducing exposure to supply chain volatility by shortening inventory turns, and capitalizing on the increasing preference among AI customers for premium, memory-intensive architectures that deliver superior computational density.
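The pass-through from memory prices to total BOM cost can be estimated with one multiplication: if memory accounts for a given share of the BOM and all other component costs stay flat, the BOM rises by that share times the memory price increase. The 30% memory share below is a hypothetical value for illustration; the 150% increase is the HBM figure quoted earlier in this report.

```python
def bom_increase_pct(memory_share: float, memory_price_increase_pct: float) -> float:
    """Percentage rise in total BOM cost, assuming non-memory costs are unchanged."""
    if not 0.0 <= memory_share <= 1.0:
        raise ValueError("memory_share must be between 0 and 1")
    return memory_share * memory_price_increase_pct


MEMORY_SHARE = 0.30  # hypothetical share of BOM spent on memory

rise = bom_increase_pct(MEMORY_SHARE, 150.0)
print(f"BOM cost rises by about {rise:.0f}% when memory prices rise 150%")
```

The same function shows why memory-dense designs are the most exposed: the larger the memory share of the BOM, the more directly price increases flow into system cost.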

This dynamic also fosters innovation in memory-efficient AI hardware design. Suppliers are investing in product lines that optimize memory bandwidth utilization, compression algorithms, and novel memory hierarchies, aiming to extract maximum throughput per byte of memory. However, such innovations are not immediate panaceas; implementing new memory architectures or novel compression techniques requires extensive validation and could temporarily elongate time-to-market for new equipment generations. The pressure to maintain agility while managing extended development cycles due to component scarcity introduces complex trade-offs for product management teams.

Furthermore, strategic adjustments include an increased reliance on long-term supplier relationships and contractual commitments to secure memory supply amid market tightness. This shift from spot procurement toward contractual stability reflects an elevated risk posture, where supply assurance is valued over short-term cost advantages. Consequently, manufacturers may invest in dual-sourcing strategies and partnerships aiming to diversify exposure to memory supply shocks. Additionally, the integration of memory supply prognostics into product roadmaps becomes a critical planning component, ensuring that forthcoming product launches can be supported by adequate memory availability to meet market demand.

These developments collectively impact the overall AI equipment ecosystem, as downstream customers, including cloud service providers and large enterprises, must absorb cost increases or accept adjusted delivery schedules. The ecosystem’s iterative feedback loop is thus influenced not only by technology evolution but also by supply chain resilience and economic considerations arising from memory scarcity. Market concentration further compounds these risks: Samsung Electronics, SK hynix, and Micron Technology collectively control over 95% of the high-performance memory market as of 2026, a fragile supply landscape in which disruptions among these major players could have outsized ripple effects for AI manufacturers and their cost structures [Chart: Market Share of Leading Memory Manufacturers].

Interdependencies Between Memory Supply Constraints and Broader Semiconductor Shortages

The memory scarcity phenomenon does not exist in isolation; it is tightly coupled with broader semiconductor shortages, notably affecting CPUs and substrate materials essential for AI hardware manufacturing. As highlighted in reference d3, the industry currently confronts an acute CPU shortage exacerbated by limited production capacity, with Intel’s 18A process node still scaling yields to meet surging demand driven by AI datacenter expansions. Limited CPU availability directly influences AI server production schedules, compounding the effects of constrained memory supply by restricting the volume of fully integrated systems that can ship on time.

Similarly, substrate shortages—a critical and often underemphasized supply chain bottleneck—impede the assembly process of both memory and logic chips. Substrates serve as the physical foundation upon which semiconductor dies are packaged, and shortages here delay overall chip availability regardless of silicon wafer readiness. This interconnectedness means that even if memory manufacturers succeed in scaling capacity, downstream hardware assemblers may face continued delays in final system delivery due to substrate and peripheral component scarcities, affecting GPUs, CPUs, and associated AI accelerators alike.
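This interdependence can be expressed as a minimum over components: the number of complete systems that can ship is capped by whichever part runs out first. The sketch below uses hypothetical inventory levels and per-system quantities; the component names and numbers are illustrative only.

```python
def buildable_systems(available: dict[str, int], per_system: dict[str, int]) -> tuple[int, str]:
    """Return how many complete systems can be built and which part limits the count."""
    limits = {part: available[part] // qty for part, qty in per_system.items()}
    bottleneck = min(limits, key=limits.get)
    return limits[bottleneck], bottleneck


# Hypothetical inventory and per-system requirements, for illustration only.
available = {"hbm_stacks": 8000, "cpus": 1200, "package_substrates": 5000}
per_system = {"hbm_stacks": 8, "cpus": 2, "package_substrates": 10}

count, limiting_part = buildable_systems(available, per_system)
print(f"{count} systems can ship; limited by {limiting_part}")
```

In this example memory alone would support 1,000 systems, yet only 500 can ship because substrates run out first, which mirrors the point that added memory capacity does not by itself relieve system-level shortages.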

These cross-component supply pressures necessitate coordinated ecosystem strategies involving semiconductor foundries, packaging suppliers, and AI hardware original equipment manufacturers (OEMs). For AI infrastructure builders and cloud providers, managing procurement becomes a multilayered challenge requiring synchronized forecasting across diverse semiconductor product lines to prevent cascading production delays. This complexity elevates operational risks and underscores the vulnerability of the AI infrastructure supply chain to single-node disruptions.

In practical terms, the confluence of memory, CPU, and substrate shortages narrows the availability window for AI hardware and lengthens lead times. Businesses that depend on these systems must manage inventory flexibly, plan procurement over longer horizons, and potentially reevaluate architectural choices to mitigate compounding shortages. This environment also fuels interest in design approaches that may reduce dependence on scarce components, such as chiplet architectures and heterogeneous integration, although widespread adoption remains further out.

Conclusion

The sustained and multifaceted demand surge from AI applications has introduced a structural realignment in the memory chip market, characterized by intensified supply constraints and elevated pricing levels that are projected to persist through at least 2027. Despite concerted efforts by key manufacturers to scale capacity, advance memory technologies, and establish long-term contractual frameworks, systemic bottlenecks in raw materials, energy availability, and specialized packaging limit rapid supply expansion and market stabilization.

These dynamics carry significant implications for AI hardware producers and the broader infrastructure ecosystem. Memory shortages contribute to suboptimal GPU utilization, increased production costs, and strategic shifts in product development cycles, thereby influencing the pace and economics of AI innovation. Moreover, the compounding effects of concurrent shortages in other components, such as CPUs and substrates, underscore the complexity of supply chain management in this sector.

Looking forward, continued monitoring of supply-demand trends, coupled with coordinated investment in manufacturing innovation and supply chain resilience, will be essential. Further analysis is warranted to explore emerging memory technologies, evolving contractual frameworks, and potential alternative compute architectures that may alleviate supply pressures and support sustainable AI growth in the longer term.

Glossary

- DRAM (Dynamic Random-Access Memory): A type of high-speed volatile memory used widely in computers and servers that stores each bit of data in a separate capacitor within an integrated circuit.

- HBM (High Bandwidth Memory): An advanced memory technology featuring vertically stacked memory dies interconnected by through-silicon vias (TSVs), providing very high bandwidth and low power consumption, crucial for AI accelerators and high-performance computing.

- NAND Flash: A type of non-volatile storage memory used in solid-state drives (SSDs) and other storage devices, offering high storage density and persistence, commonly used for storing AI model context data and conversational histories.

- AI Inference Workloads: Processes where trained AI models are actively deployed to analyze new input data and generate outputs, requiring continuous, large-scale memory access with substantial context size for sustained operation.

- AI Training Workloads: Computationally intensive phases where AI models learn from vast datasets by adjusting billions of parameters, typically needing extremely high memory bandwidth and capacity in short, concentrated bursts.

- Memory Capacity: The total amount of data storage available in a memory system, determining how much information AI workloads can handle simultaneously.

- Memory Bandwidth: The rate at which data can be read from or written to memory by a processor, critical for performance in AI applications requiring rapid data movement.

- Through-Silicon Vias (TSVs): Vertical electrical connections that pass through silicon dies in stacked memory modules like HBM, enabling fast communication with reduced latency.

- Long-Term Supply Agreements: Contracts between memory manufacturers and buyers that secure memory chip supply over multiple years, ensuring availability and price stability amid volatile demand.

- Memory Hierarchy: A structured organization of memory types, from ultra-fast but costly options like HBM to slower but cheaper storage like NAND flash, balancing performance and cost for AI workloads.

- Memory Market Concentration: The dominance of a few key manufacturers (such as Samsung Electronics, SK hynix, and Micron Technology) controlling the majority of the high-performance memory supply.

- Memory Supply Bottlenecks: Constraints in producing or delivering memory chips caused by shortages in raw materials, energy, packaging components, or manufacturing capacity that limit market supply.

- HBM3e and HBM4: Successive generations of High Bandwidth Memory technologies, with HBM4 offering improvements in bandwidth, energy efficiency, and latency over HBM3e, designed to better support AI inference workloads.

- Bill of Materials (BOM): The detailed list of components and their costs required to manufacture AI hardware, heavily influenced by changing prices and availability of memory chips.

- GPU Utilization: A measure of how effectively Graphics Processing Units are used during AI computations, influenced by memory availability and bandwidth to prevent processor stalls.

References

- Marvell Announces Breakthrough Custom HBM Compute Architecture to Optimize Cloud AI Accelerators

- AI growth no longer limited by models, but by computing power: Goldman Sachs

- CPUs reclaim the core of AI architecture as multicore trend tightens substrate supply

- PC chipmakers warn CPU shortages cloud 2026 shipment outlook

- 반도체의 수요와 공급 이해하기_메모 [Understanding Semiconductor Supply and Demand_Memo] | Valley AI

- Equity Insight: Memory - The Hidden Bottleneck Behind the AI Boom | Investment Insights

- Industry faces "acute" CPU shortage with hope that Intel 18A yields improve - OC3D

- AI Servers in 2025: What Hardware is Needed to Run LLMs and Neural Networks? - Unihost.com Blog

- How AI Forces Are Spurring a Memory Chip Shortage - Z2Data

- Rising component costs push Chinese phone makers to speed product cycles and favor high-end models

- AI server demand locks up memory supply through 2027, prices seen holding firm

- AI PCB supply crunch squeezes margins on rising materials and energy costs

- How HBM4 Improves Latency And Energy Efficiency For AI Inference?

- How Your Storage Feeds Your GPUs is the Real AI Bottleneck | Exxact Blog

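- HBM·D램 최소 3년간 품귀 … '장기 호황' 날개 단 하이닉스 [HBM and DRAM in short supply for at least three years … Hynix takes wing on a 'long-term boom'] - 매일경제 (Maeil Business Newspaper)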

- Samsung and SK Hynix race to upgrade China chip plants as NAND demand surges

- GDDR6 vs HBM - Different GPU Memory Types | Exxact Blog

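- 삼성·SK 보유자 필수 체크… AI 슈퍼사이클 '끝물' 경고신호 3 [A must-check for Samsung and SK shareholders… 3 warning signs that the AI supercycle is nearing its end] - 글로벌이코노믹 (Global Economic)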

- Memory Prices Soar Higher Than Gold: AI Demand Sparks Industrial DRAM Shortage - Blog - Forlinx Embedded Technology Co., Ltd.

- How HBM4 Improves Bandwidth Utilization In GPU Architectures?